For a long time, data loss prevention (DLP) lived in the margins of security programs. It was something teams deployed to satisfy a requirement or reduce obvious risk. A handful of policies, some visibility into network traffic, maybe a scan of cloud storage. That was usually enough.

That model reflected how work used to happen. Data moved more slowly, lived in fewer places, and followed more predictable paths. That is no longer true.

Today, data is constantly being copied, reshaped, and moved across SaaS applications, local devices, collaboration platforms, and increasingly, AI systems acting on behalf of users. A single workflow can span dozens of tools. A single user, working with AI copilots, can generate many versions of the same sensitive dataset in minutes.

In that environment, the question is no longer just where data is stored or how it moves across the network. The more important question is what actually happens to data at the moment it is used.

That is where endpoint DLP becomes essential.

The Endpoint Is Where Intent Becomes Action, and Where Risk Accelerates

There is still a tendency to think of the endpoint as a narrow surface area. Often that means a browser extension or a lightweight sensor tied to a specific application. That framing misses the point. The endpoint is not just a browser. It is the system where users and software act on data. It is where decisions are made and executed, and critically, it is where the highest concentration of data risk actually occurs.

Exfiltration does not happen in the cloud at rest. It happens when someone copies files to a USB drive, uploads a document to a personal cloud account, or forwards sensitive data through personal email. Insider threats do not announce themselves in network logs. Risk shows up in patterns of behavior: unusual access, repeated exports, and data moved in ways that fall outside normal workflows. Unsanctioned AI tool usage does not register as a policy violation at the firewall. It happens the moment a user pastes proprietary information into an external chatbot, or a third-party AI agent starts summarizing internal documents without oversight.

Consider a few common scenarios:

- A developer copies proprietary code into a personal repository

- A finance analyst pastes forecast data into an AI tool to summarize it

- A data scientist exports customer data to work on it locally

- An AI agent aggregates documents and posts a draft into a collaboration tool

None of these actions are confined to a single application or a clean, inspectable network flow. They move across desktop apps, browsers, local scripts, background processes, and AI tools running on the device.

And in each case, the risk crystallizes at the endpoint.

This is also why the endpoint provides the richest signal for understanding intent. When you can see what a user actually did with data (e.g., what they copied, where they sent it, how it was transformed), you can distinguish between legitimate work and genuinely risky behavior. Without that visibility, you are left inferring intent from fragments. You might know that data left the organization, but not why, how, or whether it mattered. That gap is not just an analytical problem. It is where investigations stall, where policy decisions get made on incomplete information, and where real incidents go undetected.

If your data security program is not operating at the endpoint, it is missing the place where most meaningful risk actually happens.

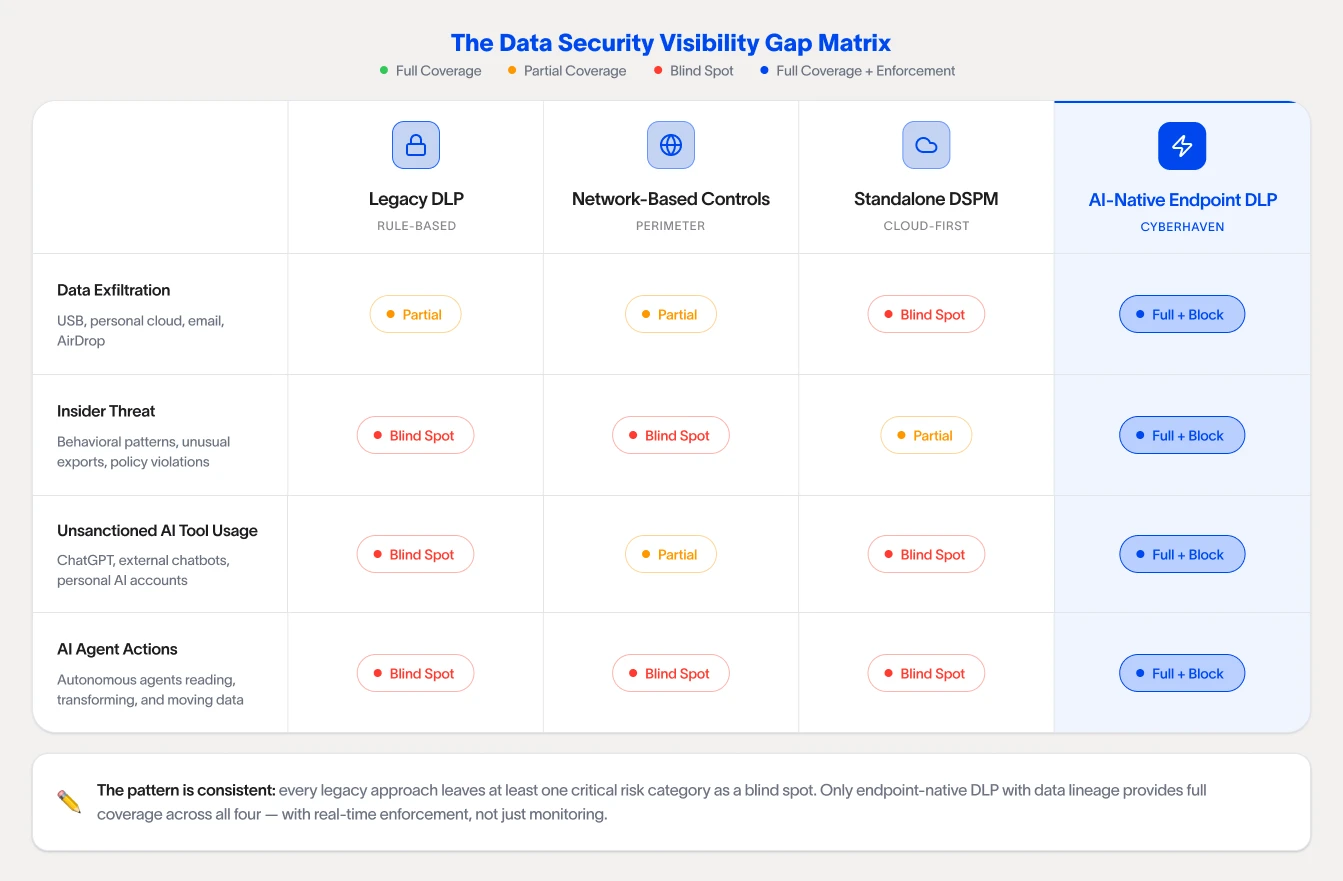

Why Legacy and Agentless Approaches Fall Short

Many legacy DLP approaches were built around a different set of assumptions. They expect data movement to be visible, structured, and relatively slow. That is exactly why they struggle in modern environments.

Static rules generate noise instead of signal. When users are constantly reshaping data and moving between tools, rule-based systems cannot keep up. They fire on volume, not on context. A file copied to an approved cloud drive looks the same as one copied to a personal account. An analyst pasting data into an internal tool triggers the same alert as one pasting it into an external AI chatbot. Without understanding what the data is, where it came from, and what the user was actually doing, every action starts to look equally suspicious. The result is a flood of false positives that security teams have no reliable way to triage.
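To make the contrast concrete, here is a deliberately simplified sketch, assuming a hypothetical event shape with a content label (assumed to come from some classifier) and a flag saying whether the destination is on an approved list. None of these field names come from any real product. The static rule fires on every movement of sensitive content; the contextual rule uses one extra piece of context, the destination, and fires only on the personal drive and the external chatbot. Real context would also include origin and user activity.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataMovement:
    """Hypothetical endpoint event: labeled content sent to a destination."""
    content_label: str          # assumed output of a content classifier
    destination: str            # hypothetical destination identifier
    destination_approved: bool  # assumed lookup against an approved-apps list


def static_rule(event: DataMovement) -> bool:
    # Fires on any movement of non-public content, wherever it goes.
    return event.content_label != "public"


def contextual_rule(event: DataMovement) -> bool:
    # Fires only when non-public content reaches an unapproved destination.
    return event.content_label != "public" and not event.destination_approved


events = [
    DataMovement("financial_forecast", "corp-drive.example", True),
    DataMovement("financial_forecast", "personal-drive.example", False),
    DataMovement("financial_forecast", "internal-ai.corp.example", True),
    DataMovement("financial_forecast", "external-chatbot.example", False),
]

static_alerts = [e.destination for e in events if static_rule(e)]
contextual_alerts = [e.destination for e in events if contextual_rule(e)]
print(len(static_alerts))  # 4
print(contextual_alerts)   # ['personal-drive.example', 'external-chatbot.example']
```

Even this one extra field halves the alert count in the example. The point is not the specific rule but that the decision depends on context the static rule never looks at.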

That alert fatigue is not a minor operational inconvenience. It is a structural failure. When analysts spend their time reviewing noise, real incidents get buried. Teams start tuning policies more aggressively to reduce volume, which means genuinely risky behavior gets suppressed along with the false alarms. Over time, the program becomes less effective precisely because it was generating too many alerts in the first place.

API-based approaches answer a different question than AI-native, endpoint-based DLP does. Connecting to SaaS applications via API can surface useful information about what data exists and where it lives. But an API integration is not the same as operating on an endpoint. It sees what an application is willing to report, at the moment the query runs. It does not observe what a user actually did with a file before it reached that application, what happened to it after, or what is happening right now on a device outside that application's scope. Treating API connectivity as endpoint visibility is a fundamental category error, one that leaves entire classes of risk unobserved.

Standalone data security posture management (DSPM) vendors only solve part of the data security problem. These tools have real value for understanding where sensitive data lives across cloud environments. But visibility into data at rest is not the same as visibility into data in motion. Standalone DSPM was not designed to observe how data is used on endpoints, enforce controls in real time, or integrate into the broader workflow of a security team responding to an active incident. More fundamentally, it does not meet security teams where they are, with their existing tech stack. A tool that only sees one slice of the environment cannot enhance a data security program; it just adds another console to manage and another blind spot to work around.

Together, these approaches share a common limitation: They were designed to observe data from the outside, at arm's length, through channels that were never built to capture the full picture.

The gaps they leave are not edge cases. They are the exact conditions under which modern data loss happens.

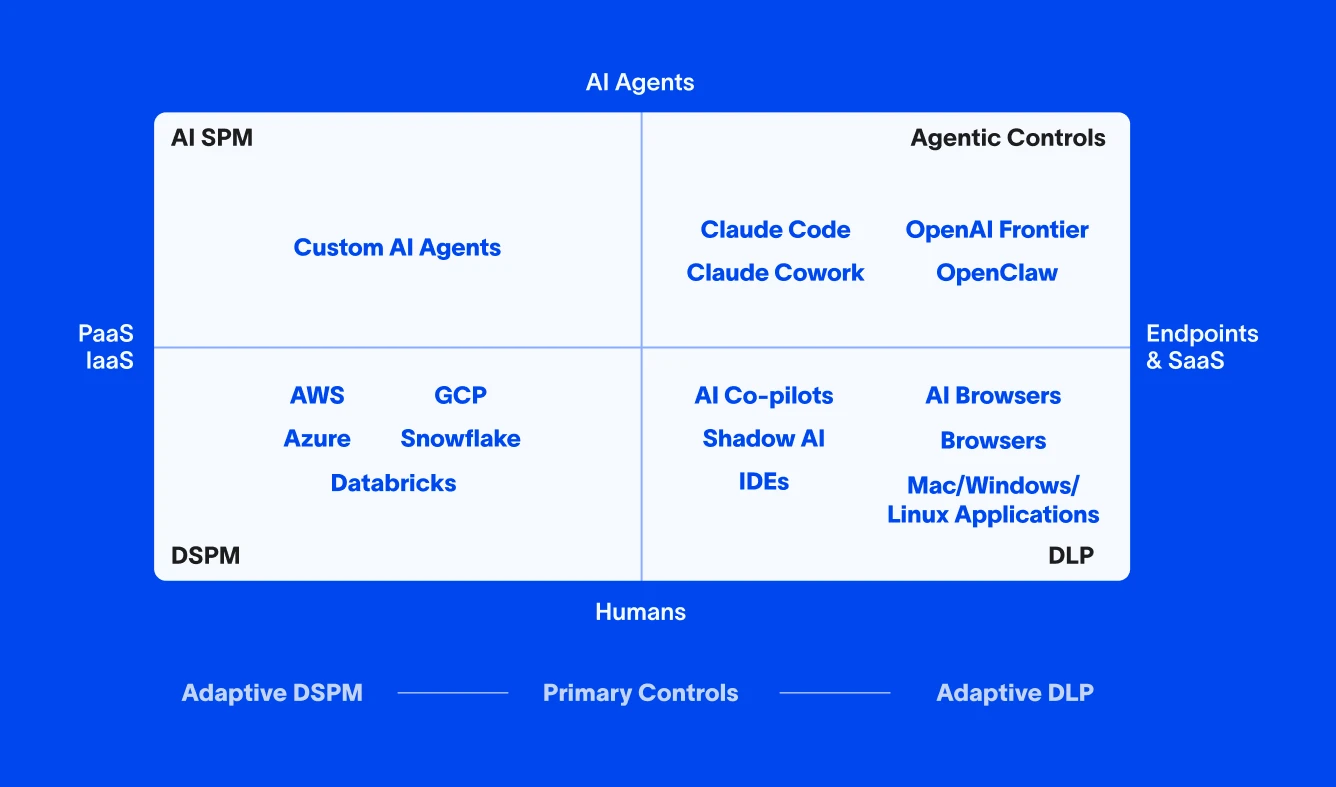

The graphic above illustrates why this matters. Moving from one quadrant to another is not a product decision. It is an architectural one. A vendor built around cloud visibility cannot simply add endpoint enforcement. A tool designed to monitor cannot simply become one that acts. The foundation determines what is possible, and most vendors in this space were built on the wrong one.

Cyera Only Sees the Cloud: Where Data Security Stops and Risk Begins

Cyera focuses first on finding and understanding sensitive data across cloud environments and SaaS applications. And the technology is good at that. But that strength is also a limitation. Cyera is a magnifying glass focused on a single blade of grass. Visibility is strong in the cloud, but it fades quickly once data starts to move. And in most organizations, data is always moving.

The deeper problem is that cloud-first visibility produces a static picture of a dynamic problem. Cyera's view of sensitive data reflects where it was, not necessarily where it is now or what has been done with it. By the time a scan surfaces a finding, the data may have already been downloaded, transformed, and shared across a half-dozen tools. That lag is not just an inconvenience. It means the context security teams need to act on is already out of date before anyone sees it.

When a user downloads a file and pastes it into Slack, email, or an external AI tool like ChatGPT, that chain of events largely disappears from Cyera's view. The platform sees the access, but it does not see what came next. And because it was not designed to operate on the endpoint, it cannot enforce controls at the moment of interaction. It can flag, it can report, but it cannot stop. For organizations that need to do more than monitor, that distinction matters enormously. Visibility without the ability to act is not data security. It is documentation of risk after the fact.

That gap in enforcement compounds into a gap in signal quality. Without endpoint context, without knowing what a user actually did with data, how it was transformed, and what it was combined with, cloud-only tools are forced to make judgments on incomplete information. The result is familiar to those using Cyera or other standalone DSPM solutions: alerts that fire without enough context to act on, findings that cannot be confidently triaged, and security teams spending time investigating noise instead of real incidents. Partial visibility does not just leave gaps. It actively generates the kind of false positive volume that erodes confidence in the program over time.

The foundational questions that matter most in a real investigation cannot be answered from the cloud alone. Where did this data originate? Was it modified? Was it combined with other sensitive information? Was this action actually risky, or routine behavior taken out of context? Answering those questions requires visibility into what happened on the device, at the moment it happened. Without that, teams are left knowing where sensitive data lives in the cloud while remaining largely blind to how it is actually being used.

What It Takes to Do Endpoint DLP Well

Building an endpoint DLP capability that works in practice is not straightforward. Most teams underestimate the complexity until they are already deep into a deployment, and most vendors understate it for the same reason. There are three compounding challenges (disparate environments, instability across systems, the complexity of data lineage) that separate programs that work from programs that quietly erode over time.

The Environment Is Never Uniform

True AI-native endpoint DLP does not mean securing one device or even one configuration. It means operating across thousands of endpoints simultaneously, each running different operating systems, different OS versions, different hardware profiles, and different combinations of applications. A policy that behaves predictably on a fully patched macOS 14 device may behave entirely differently on a Windows machine running a legacy version in a regulated environment. Multiply that across an enterprise and you have not one endpoint problem. You have thousands of distinct environments that all need to be handled consistently.

That fragmentation does not just complicate deployment. It directly affects the quality of the data you receive. When endpoints behave differently, the telemetry they produce is inconsistent. Events get logged differently, actions get classified differently, and the same user behavior can surface as completely different signals depending on the device. Trying to build reliable, low-noise alerting on top of that kind of fragmented data is genuinely hard. It is a core reason why endpoint DLP programs struggle with false positive volume at scale. The problem is not just the detection logic. It is that the underlying data is inherently messy.
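One common way to tame this is to normalize raw events into a single schema before any detection logic runs. The sketch below assumes two hypothetical agents, one on macOS and one on Windows, that report the same file copy under different event names and field names. The event types and field names are invented for illustration and do not correspond to any real agent or OS API.

```python
from dataclasses import dataclass
from typing import Any, Dict, Optional


@dataclass(frozen=True)
class NormalizedEvent:
    action: str  # canonical action name shared across platforms
    path: str    # path with separators normalized to "/"
    user: str


# Hypothetical raw event types from two agents, mapped to one vocabulary.
ACTION_MAP = {
    ("macos", "file.duplicated"): "file_copy",
    ("windows", "FileCopied"): "file_copy",
    ("macos", "pasteboard.write"): "clipboard_write",
    ("windows", "ClipboardSet"): "clipboard_write",
}

# Hypothetical per-platform field names carrying the same information.
FIELD_MAP = {
    "macos": ("target_path", "uid_name"),
    "windows": ("TargetFileName", "UserName"),
}


def normalize(platform: str, raw: Dict[str, Any]) -> Optional[NormalizedEvent]:
    action = ACTION_MAP.get((platform, raw.get("type", "")))
    if action is None or platform not in FIELD_MAP:
        return None  # unknown event: drop it rather than guess at its meaning
    path_key, user_key = FIELD_MAP[platform]
    path = str(raw.get(path_key, "")).replace("\\", "/")
    return NormalizedEvent(action=action, path=path, user=str(raw.get(user_key, "")))


mac = normalize("macos", {"type": "file.duplicated",
                          "target_path": "/Users/ana/q3.xlsx", "uid_name": "ana"})
win = normalize("windows", {"type": "FileCopied",
                            "TargetFileName": "C:\\Users\\ana\\q3.xlsx", "UserName": "ana"})
print(mac.action == win.action)  # True
print(win.path)                  # C:/Users/ana/q3.xlsx
```

Dropping unknown event types instead of guessing is a deliberate choice in this sketch: an unmapped event that gets misclassified is exactly the kind of inconsistency that feeds false positives downstream.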

Agent Stability Is Where Most Vendors Quietly Fail

An endpoint agent operates directly on user devices, intercepting actions in real time. That means any instability does not stay contained. It shows up as slowdowns when opening files, delays during uploads, and performance degradation during normal workflows. In the worst case, it can contribute to system crashes or conflict with other security tools running on the same device. Users notice immediately, IT teams get flooded with complaints, and the program loses credibility before it has a chance to demonstrate value.

Modern operating systems make this harder, not easier. On macOS, Apple has deprecated kernel extensions in favor of system extensions built on frameworks like Endpoint Security. Windows has followed a similar path. Any endpoint DLP system has to work within these constraints, which limits certain approaches and raises the bar for engineering teams that want both deep visibility and stable operation. What matters is not how the agent performs in a controlled test environment. It is how it behaves at the moments when users are actively interacting with data, under real-world conditions, across a fragmented and unpredictable landscape.

The vendors that treat endpoint DLP as purely a detection problem tend to underinvest here. Doing it well requires treating the endpoint as a systems engineering challenge first, where stability, performance, and consistent behavior are the foundation that everything else depends on.

Why Data Lineage Changes the Equation

Even if you solve the scale and stability problems, you are still left with a more fundamental challenge: Seeing that data moved is not the same as understanding what that movement means. Raw endpoint visibility tells you that something happened. It does not tell you whether that action was risky.

Context is what closes that gap. By tracking how data is created, copied, modified, and shared over time, rather than treating each event in isolation, it becomes possible to build a continuous record of where data originated, how it has changed, and how it is actually being used. Endpoint telemetry captures what happens on the device, while integrations with SaaS applications fill in what happens beyond it.

That continuity is what enables precise decisions. It makes it possible to distinguish between a developer working within approved systems and one moving code into an unapproved environment, or between an analyst using an internal AI tool and one pasting sensitive data into an external chatbot. Without it, those scenarios look identical, and security teams are left making judgment calls on incomplete information.
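A toy model helps show why continuity matters. The sketch below is a minimal, in-memory lineage graph, assuming that every copy, edit, or paste is observed as an event linking a new artifact to its source; it is not Cyberhaven's implementation. Each derived artifact inherits its parent's origin, so a snippet pasted into a scratch file still "remembers" that it came from an internal repository. The source and destination names, and the `is_risky` policy, are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Artifact:
    artifact_id: str
    origin: str                   # where the data first appeared
    parent: Optional[str] = None  # artifact this one was derived from


class LineageGraph:
    """Minimal in-memory lineage: each derived artifact inherits its parent's origin."""

    def __init__(self) -> None:
        self._nodes: dict[str, Artifact] = {}

    def create(self, artifact_id: str, origin: str) -> None:
        self._nodes[artifact_id] = Artifact(artifact_id, origin)

    def derive(self, new_id: str, parent_id: str) -> None:
        # A copy, edit, export, or paste carries forward the origin of its source.
        parent = self._nodes[parent_id]
        self._nodes[new_id] = Artifact(new_id, parent.origin, parent_id)

    def origin_of(self, artifact_id: str) -> str:
        return self._nodes[artifact_id].origin

    def history(self, artifact_id: str) -> list[str]:
        # Walk parent links back to the original artifact.
        chain: list[str] = []
        current: Optional[str] = artifact_id
        while current is not None:
            chain.append(current)
            current = self._nodes[current].parent
        return chain


# Hypothetical policy inputs.
INTERNAL_SOURCES = {"git.corp.example"}
APPROVED_DESTINATIONS = {"git.corp.example"}


def is_risky(graph: LineageGraph, artifact_id: str, destination: str) -> bool:
    # Risky only if internally sourced data heads somewhere unapproved.
    return (graph.origin_of(artifact_id) in INTERNAL_SOURCES
            and destination not in APPROVED_DESTINATIONS)


g = LineageGraph()
g.create("payments/ledger.py", "git.corp.example")
g.derive("clipboard#1", "payments/ledger.py")
g.derive("scratch/snippet.py", "clipboard#1")

print(g.history("scratch/snippet.py"))  # ['scratch/snippet.py', 'clipboard#1', 'payments/ledger.py']
print(is_risky(g, "scratch/snippet.py", "git.corp.example"))     # False
print(is_risky(g, "scratch/snippet.py", "github.com/personal"))  # True
```

The same file pushed to two destinations gets two different answers, and the decision rests on where the data came from rather than on the file name or the application involved.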

Endpoint DLP in an AI-Driven Environment

The rise of AI changes the scale of the data security problem, and that scale continues to grow.

AI tools make it easier to generate new versions of data, summarize content, and move information between systems. They also introduce new forms of automation, where agents can act on data with limited oversight.

This increases both the volume of data being handled and the speed at which it moves, quickly overwhelming security teams whose processes and tech stacks were not built for either.

At the same time, AI tools create new points of risk. Sensitive data can be embedded in prompts, processed by external systems, or redistributed in ways that are difficult to track without full endpoint visibility.

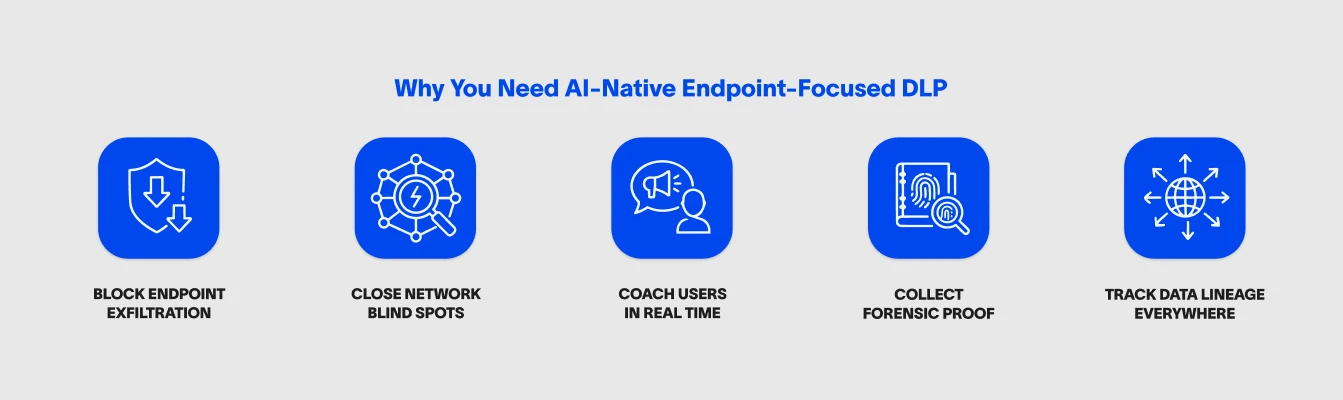

By operating at the endpoint, Cyberhaven can observe how both users and AI tools interact with data. Policies can be applied based on what the data is, where it came from, and how it is being used, rather than relying solely on application names or destinations. Cyberhaven doesn't need to rebuild or acquire technology to monitor AI on the endpoint, because the endpoint was always the foundation.

The same underlying visibility also supports automated detection and investigation. Cyberhaven's AI capabilities use lineage and endpoint telemetry to identify risks, triage incidents, and reduce the need for manual review. Cyberhaven secures AI workflows while using the same technology to accelerate security operations.

In real environments, the pattern is consistent. The most significant risks tend to appear when data is actively being used, not when it is sitting in storage.

Organizations dealing with regulated data, intellectual property, or sensitive customer information often find that endpoint visibility fills a gap that other controls cannot address on their own, because the endpoint is more often than not where the action is.

It allows them to see how data is actually handled day to day and intervene when necessary without disrupting legitimate work.

Endpoint DLP as a Foundation, Not an Add-On

If you look at how data risk has evolved, a few things become clear.

- Understanding intent requires visibility into user and system actions at the endpoint.

- Tracking data accurately requires continuity as it moves and changes.

- Making controls usable requires careful attention to performance and user experience.

Cloud and network controls still play an important role. But on their own, they no longer provide a complete picture. Endpoint DLP has shifted from being an optional layer to a foundational component of a modern data security strategy.

The organizations that recognize this are not just improving visibility. They are aligning their security models with how work actually happens today.

Learn more about how data lineage and endpoint visibility create the core of Cyberhaven's technology and outcomes.
