Cyberhaven for generative AI.

Cyberhaven secures the future of work by enabling visibility and control over sensitive data flowing to and from generative AI applications.

GenAI Risks

The challenges of securing generative AI usage

Generative AI tools, like ChatGPT, promise productivity gains for employees of all kinds – but pose a new risk to confidential company information.

Identify shadow AI usage

IT teams need to know which AI tools employees are using so they can invest in the right ones and enable corporate versions that meet security and compliance requirements.

Protect data going into AI

Ensure corporate data is only going into corporate AI tools that have robust data protection. Don't block personal AI tools, but block corporate data from going into them.

Track AI-generated material

Track how AI-generated content is used and reduce the risk of copyrighted, patented, malicious, or inaccurate material making its way into company data.

Cyberhaven for AI

Cyberhaven gives you the visibility needed to securely use AI

Understand shadow AI usage

Keep up with new AI tools as end-user adoption of risky “shadow AI” far outpaces corporate IT controls. Cyberhaven lets you see every AI tool used by your organization, who’s using them, and what kinds of data are interacting with them.

See all data flows to AI tools out of the box

Cyberhaven records all data flows to the internet without any configuration or policies needed, so your team can understand what sensitive data is flowing to and from these tools. Use these insights to partner with business leaders and develop company policies regarding the usage of AI applications.

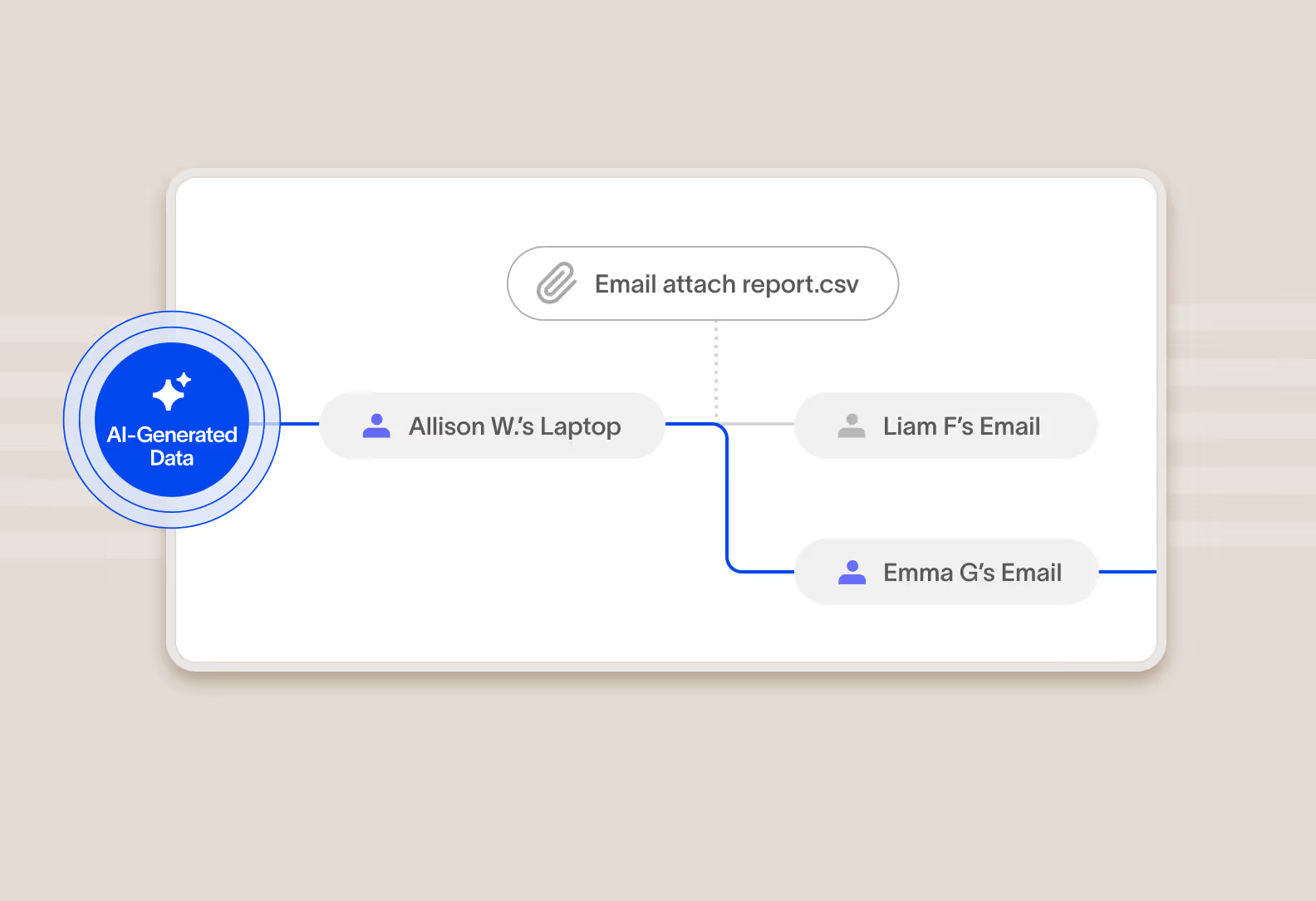

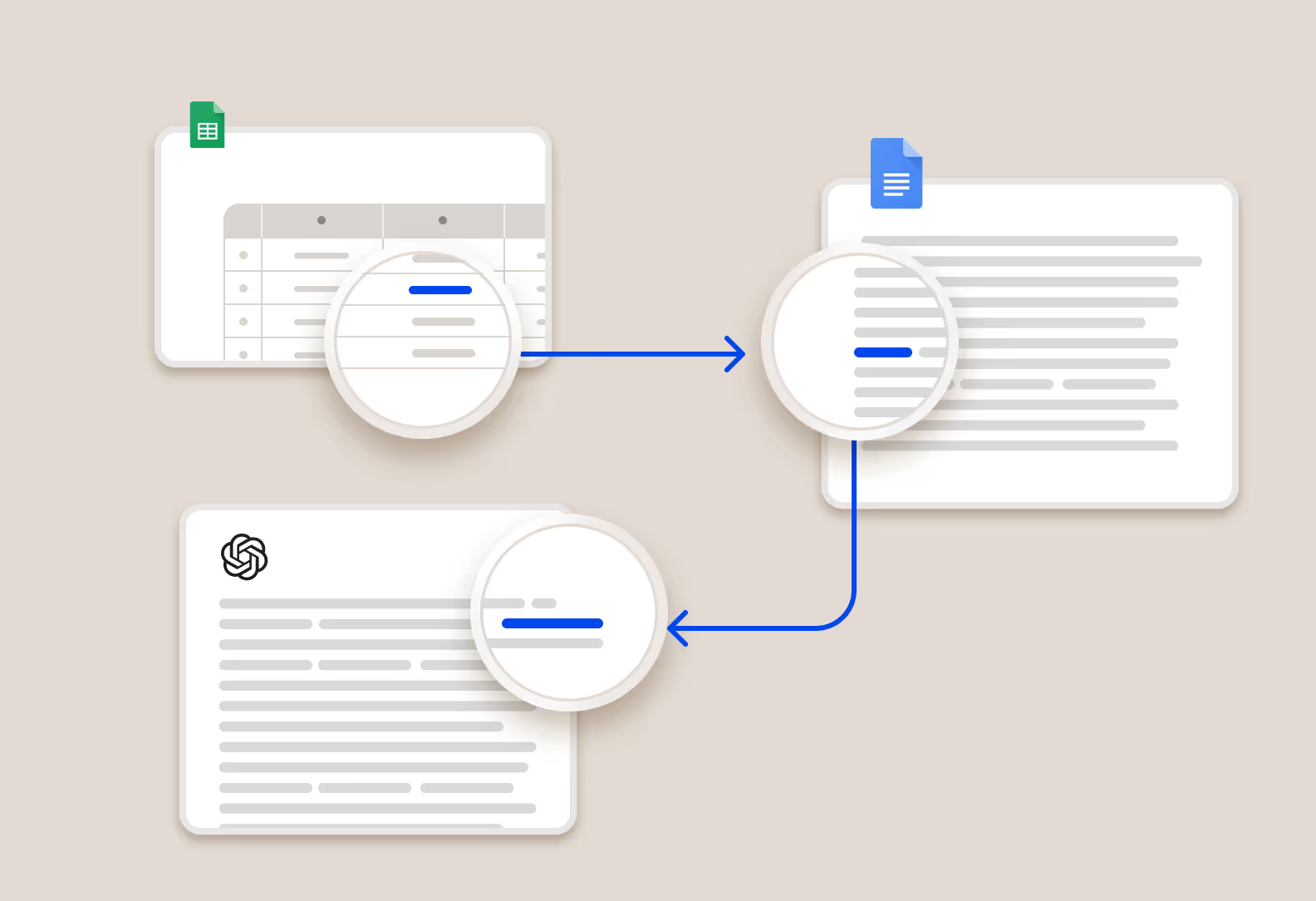

Identify AI-generated data

Cyberhaven traces data from its origins, including generative AI tools, to the people and systems that use it. By identifying the source of data, you can protect against the accidental use of copyrighted material from AI, reduce the risk of decisions based on AI hallucinations, and guard against malicious code.

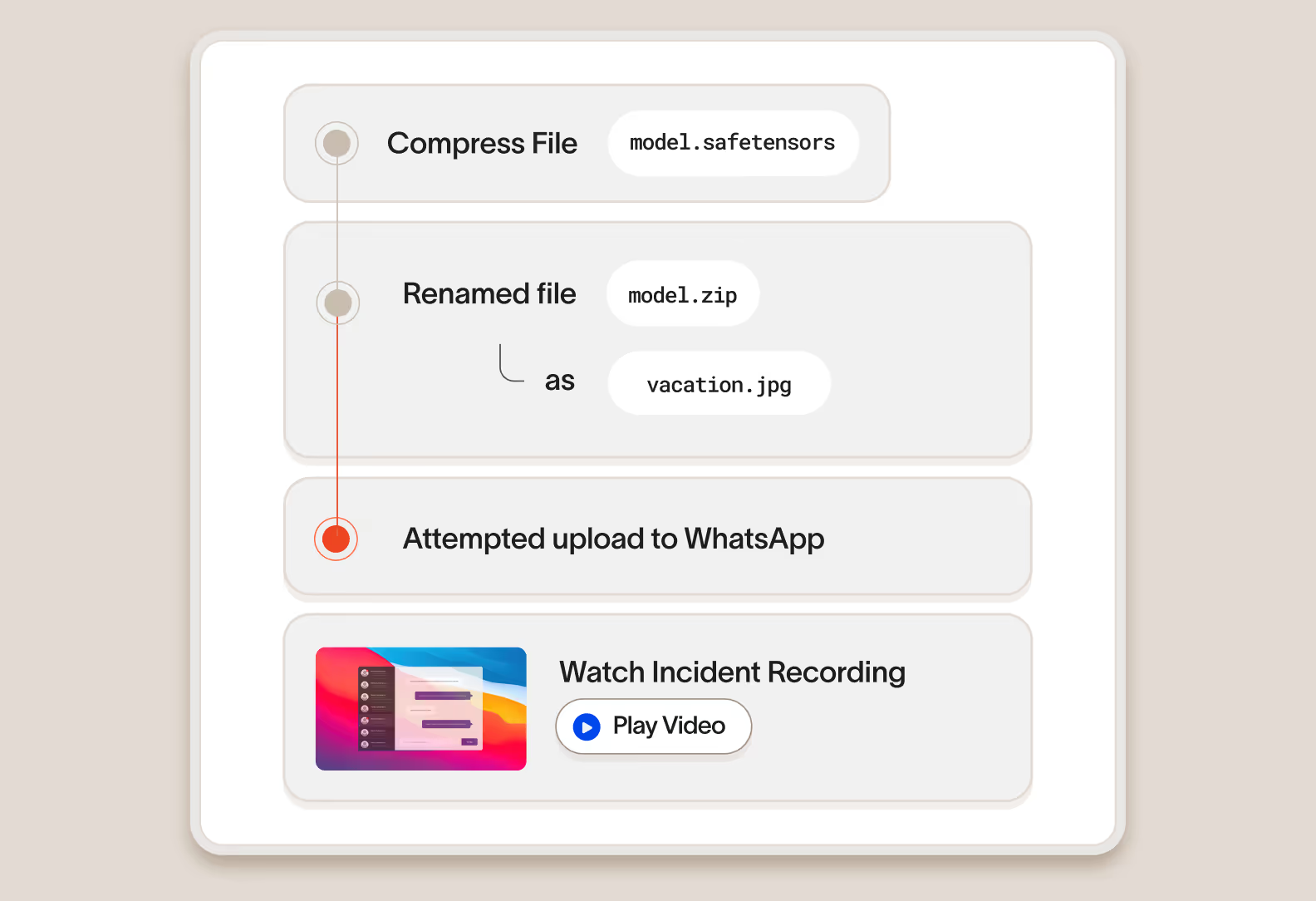

Secure the keys to AI innovation

For companies building AI solutions in-house or using third-party LLMs, Cyberhaven Data Detection and Response (DDR) identifies and protects sensitive information that other tools often miss. That includes source code, training data, model weights, and strategy documents that are critical to maintaining a competitive edge.

Control AI Data

Control the flow of data to and from AI tools with simple, powerful policies

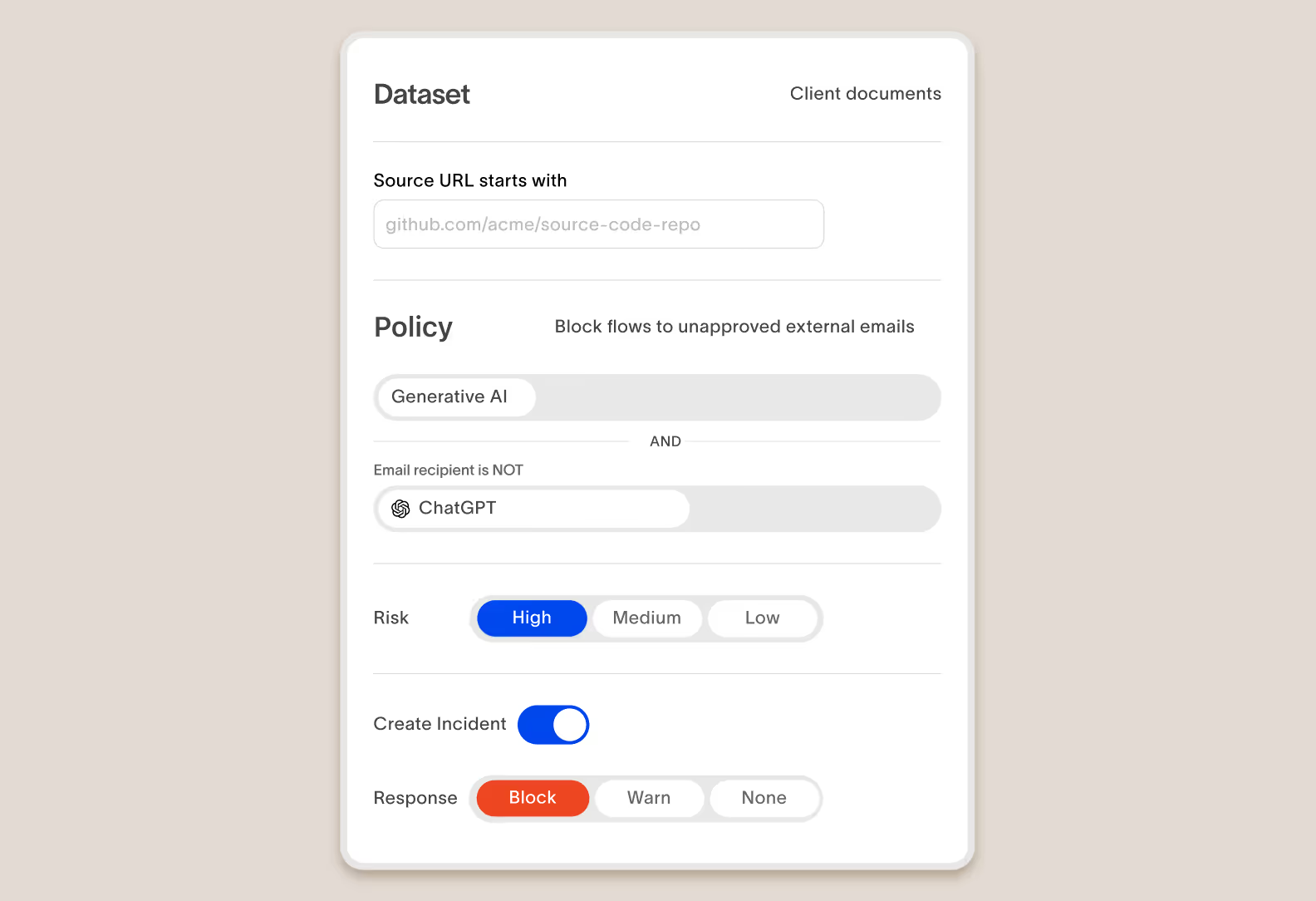

Cyberhaven makes it possible to define incredibly simple policies that prevent your sensitive data from flowing to dangerous AI tools.

Test policies on historical data to quickly preview and iterate

Cyberhaven maintains a complete record of every user action for every piece of data. When editing a policy, you can see how it would have applied to historical data and make adjustments quickly, without deploying it to production and waiting for results and complaints.
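To make the idea of previewing a policy against history concrete, here is a minimal Python sketch. It is not Cyberhaven's API or policy format: the `FlowEvent` record, the `preview_policy` helper, the data labels, and the destination names are all hypothetical, chosen only to illustrate replaying a candidate rule over recorded events before enforcing it.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List


@dataclass(frozen=True)
class FlowEvent:
    """Hypothetical record of one historical data flow (not a real product schema)."""
    user: str
    data_label: str   # assumed classification, e.g. "source_code" or "public"
    destination: str  # assumed destination hostname


# A policy is modeled as a predicate: True means the flow would have been blocked.
Policy = Callable[[FlowEvent], bool]


def preview_policy(policy: Policy, history: Iterable[FlowEvent]) -> List[FlowEvent]:
    """Return the historical events the candidate policy would have matched."""
    return [event for event in history if policy(event)]


def block_source_code_to_ai(event: FlowEvent) -> bool:
    """Candidate rule: source code must not flow to the listed AI destinations."""
    ai_destinations = {"chat.openai.com"}  # illustrative list only
    return event.data_label == "source_code" and event.destination in ai_destinations


history = [
    FlowEvent("alice", "source_code", "chat.openai.com"),
    FlowEvent("bob", "public", "chat.openai.com"),
    FlowEvent("carol", "source_code", "github.example.com"),
]

matches = preview_policy(block_source_code_to_ai, history)
for event in matches:
    print(f"would block: {event.user} -> {event.destination}")
```

Because the rule is evaluated only against recorded events, widening or narrowing it and rerunning the preview shows the effect of each change without touching live traffic.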

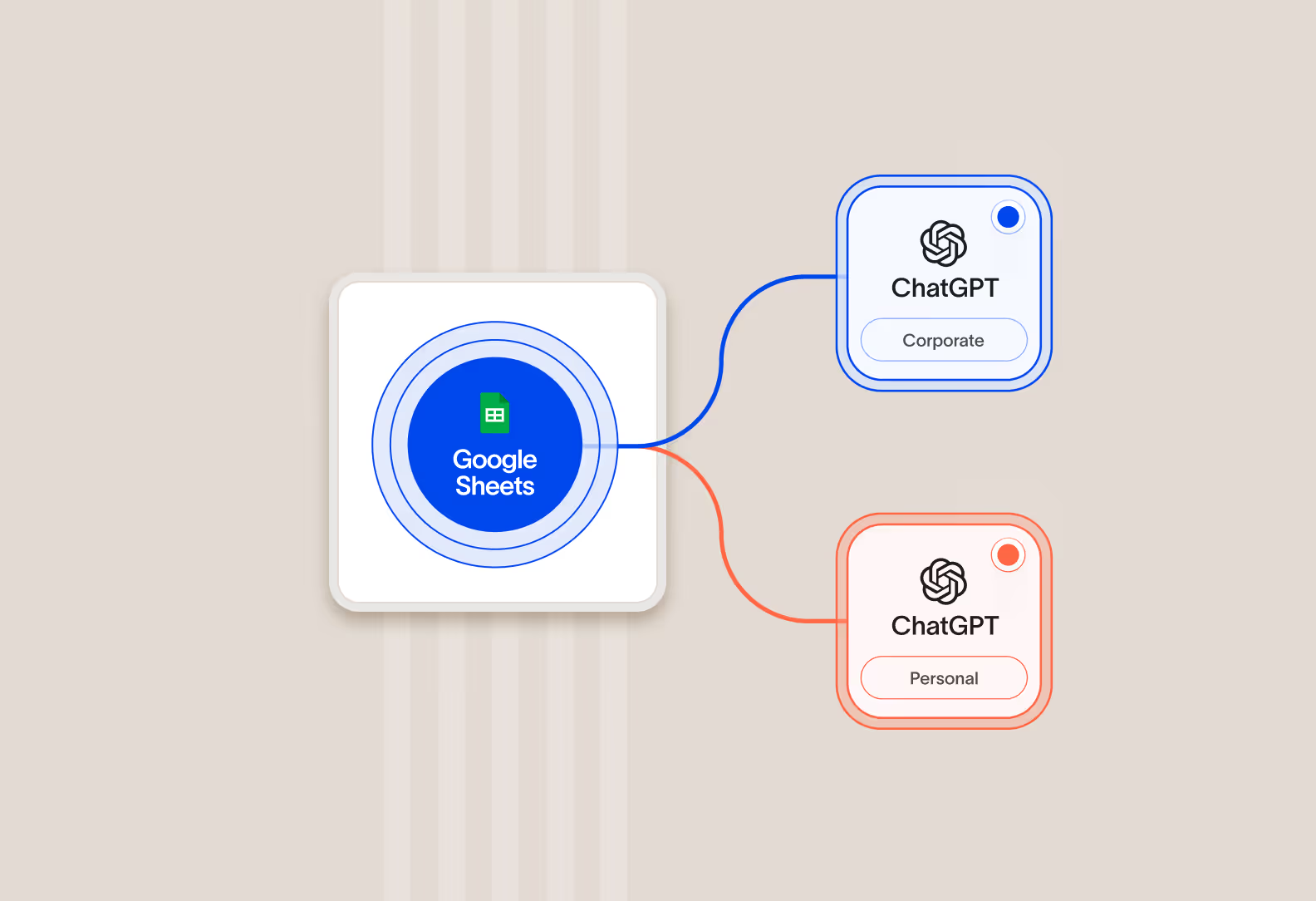

Differentiate between personal and corporate AI accounts

Cyberhaven provides granular visibility into which account is being used with each AI application. This lets you allow flows to corporate instances that have data privacy protections while blocking flows to personal instances that can lead to exposure.
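The account-based distinction can be illustrated with a short Python sketch. Everything here is an assumption made for illustration: the `AiUpload` record, the `CORPORATE_DOMAINS` set, and the email-domain check stand in for however account type is actually determined, and none of it reflects Cyberhaven's implementation.

```python
from dataclasses import dataclass

# Assumption: corporate AI accounts are recognized by their email domain.
CORPORATE_DOMAINS = {"example.com"}


@dataclass(frozen=True)
class AiUpload:
    """Hypothetical description of data about to be sent to an AI application."""
    app: str
    account_email: str
    contains_sensitive_data: bool


def is_corporate_account(account_email: str) -> bool:
    """Treat the account as corporate if its domain is in the allowlist."""
    domain = account_email.rsplit("@", 1)[-1].lower()
    return domain in CORPORATE_DOMAINS


def decide(upload: AiUpload) -> str:
    """Allow non-sensitive data anywhere; allow sensitive data only to corporate accounts."""
    if not upload.contains_sensitive_data:
        return "allow"
    return "allow" if is_corporate_account(upload.account_email) else "block"


corporate = AiUpload("ChatGPT", "alice@example.com", contains_sensitive_data=True)
personal = AiUpload("ChatGPT", "alice@gmail.com", contains_sensitive_data=True)
harmless = AiUpload("ChatGPT", "alice@gmail.com", contains_sensitive_data=False)

print(decide(corporate), decide(personal), decide(harmless))
```

Note that the personal account is not blocked outright in this sketch: only sensitive data headed to it is stopped, which mirrors the goal of blocking corporate data rather than personal AI tools.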

Immediate Response

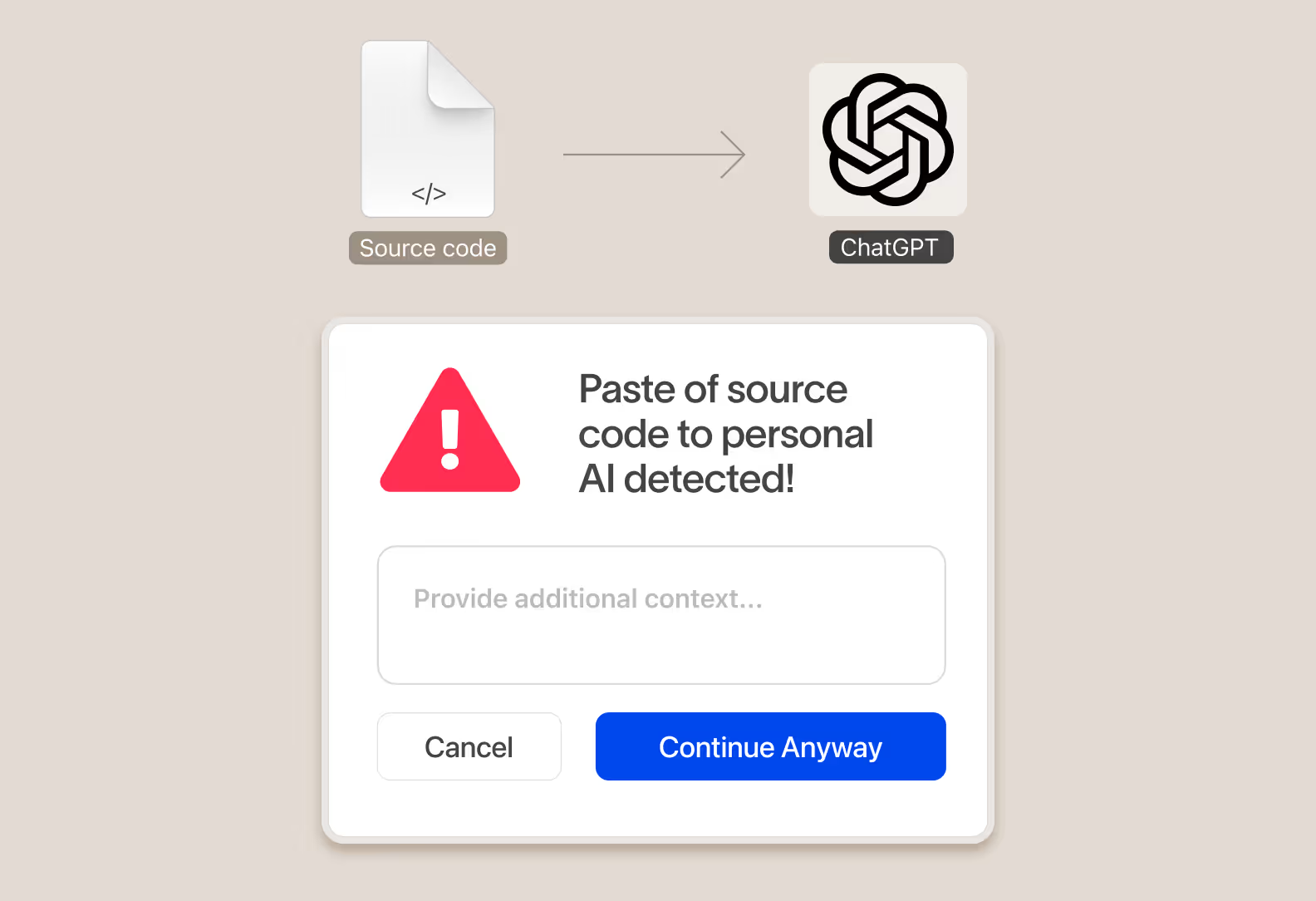

Take real-time action to protect data and educate users on the right behavior

When data is at risk of flowing to an unapproved AI application, take action instantly: surface a message that educates the user on company policy and redirects them to approved alternatives. An educated employee base leads to 80% fewer incidents and reduced risk to data over time.

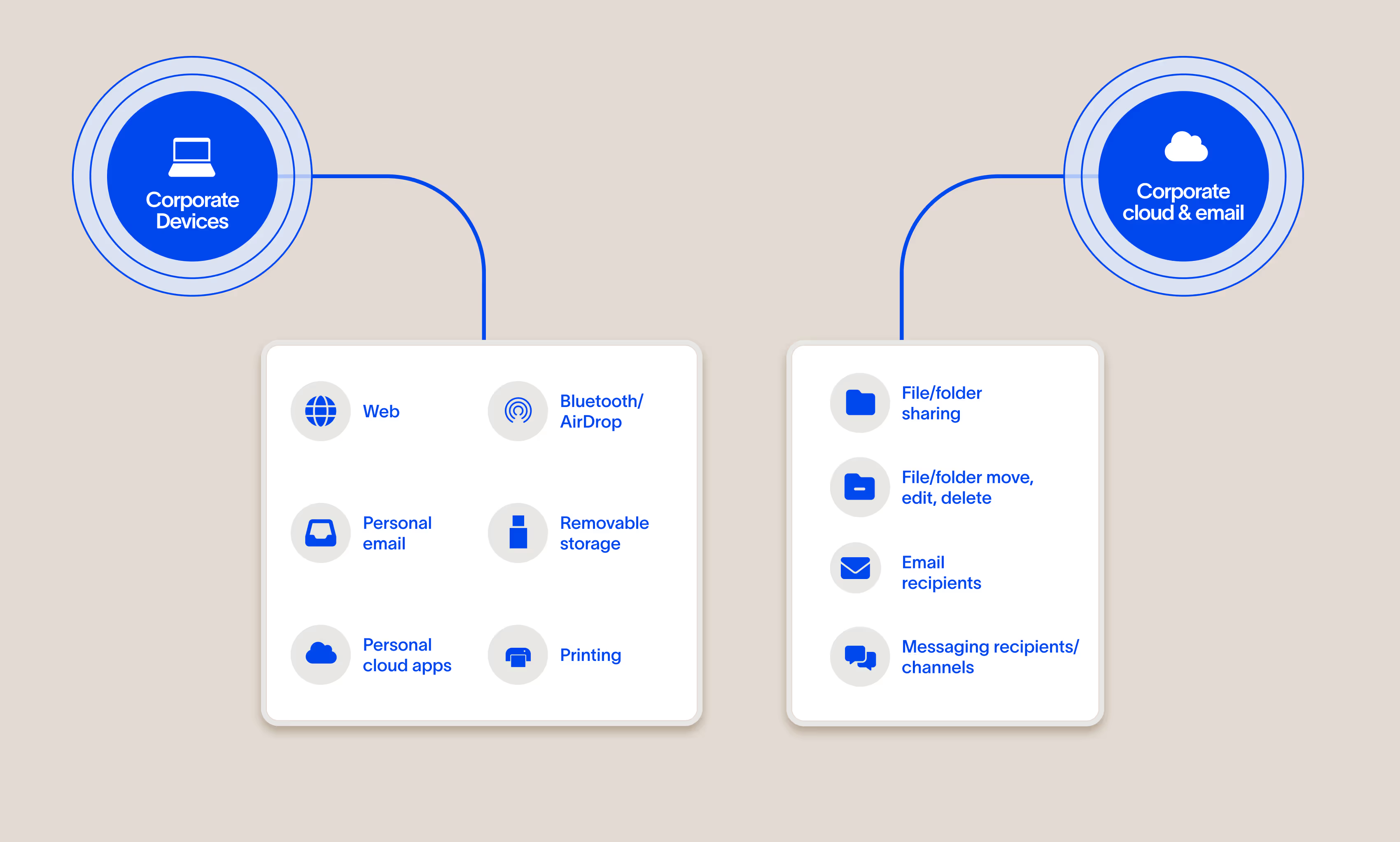

Complete coverage

One product to protect data across every exfiltration channel

Cyberhaven Data Detection and Response (DDR) makes it possible to stop exfiltration across all channels with one product and one set of policies.

Unified visibility and enforcement

Cyberhaven AI & Data Security Platform

One unified solution for protecting data wherever it lives and goes.

DSPM

Discover and classify data, detect risk as it flows between clouds and devices, and secure it automatically with Data Security Posture Management.

DLP

Protect data and stop exfiltration: coach users and block leaks across email, web, cloud, and devices with reimagined Data Loss Prevention.

IRM

Combine data and behavior signals to stop insider threats, clarify intent, and catch slow-burning risks with Insider Risk Management.

AI security

Increase AI adoption securely, understand shadow AI usage, assess AI risk posture, and prevent leaks without blocking teams, all with AI Security.
