AI Security for the Age of Autonomous Agents

Cyberhaven provides unprecedented visibility into AI usage and enforces risk-based controls, enabling AI adoption while keeping data safe.

AI security built for chat-only tools can’t govern agents

Why existing tools aren't enough

The way AI is used has fundamentally changed. Employees and developers are no longer just experimenting with chatbots. Autonomous agents are running on endpoints, accessing files, processing sensitive data, and continuously executing actions outside the visibility of most security programs.

AI keeps evolving

Endpoint-based agents, local models, MCP servers, and background processes increase complexity and risk while remaining largely invisible.

Data moves at machine speed

As AI continues to read, transform, and act on data, legacy DLP tools, built for human-speed actions, can't keep up.

Controls stop at the browser

Existing AI security approaches have no visibility into agents that operate at the OS level and bypass network controls entirely.

Discovery, observability, and control across every AI surface

Cyberhaven gives security teams real-time visibility into AI apps, agents, and data flows across SaaS, endpoints, and developer environments. Policies are enforced based on behavior and data context, not assumptions.

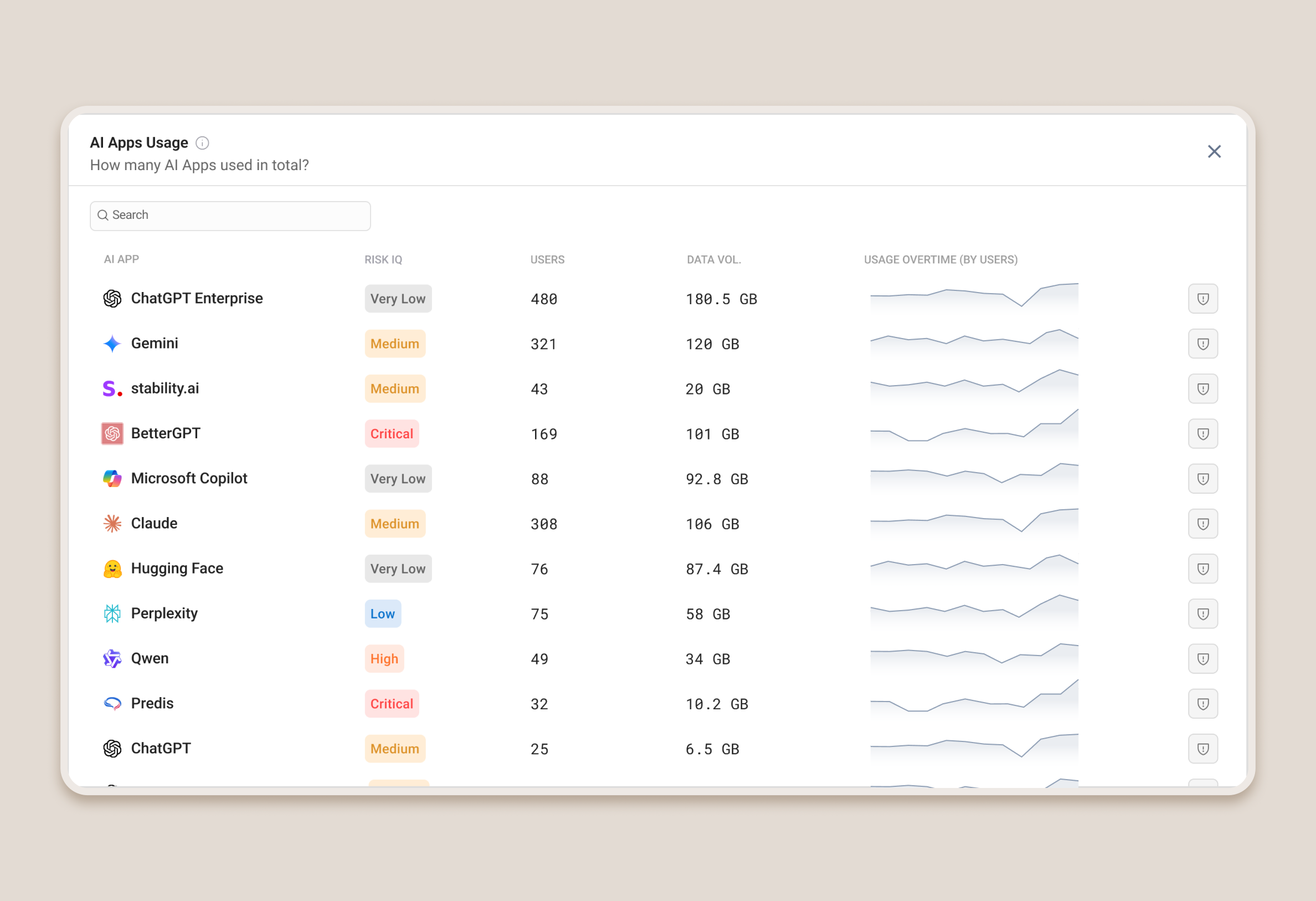

Discover every AI app and agent

Cyberhaven automatically inventories sanctioned and unsanctioned AI apps as they appear across the organization, from mainstream SaaS generative AI applications to endpoint coding assistants, open-source agent frameworks, and MCP servers. No manual cataloging required.

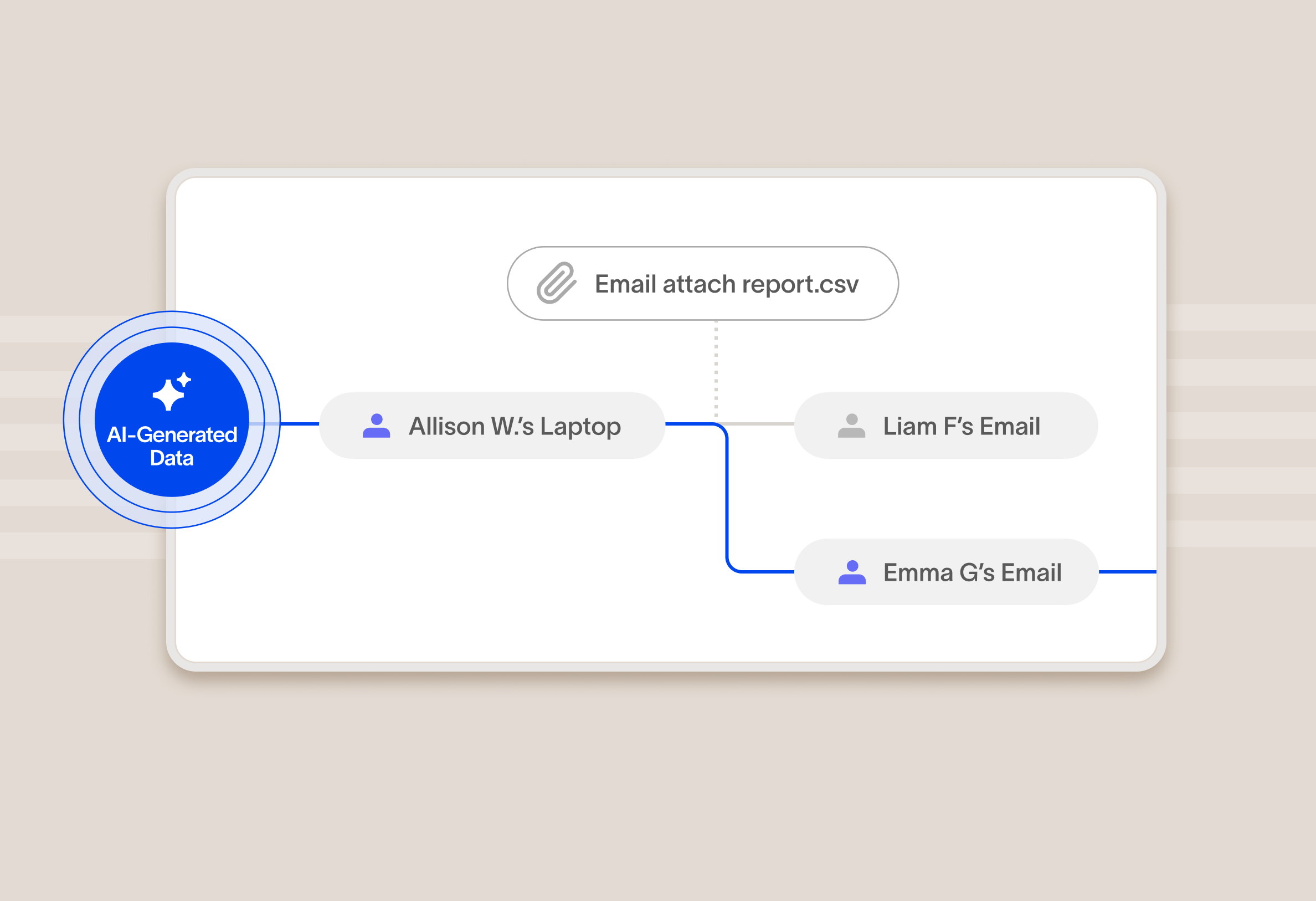

Observe what data agents ingest

Go beyond detecting which tools are present. Cyberhaven tracks what data each agent accesses, what actions it takes, and how sensitive data moves through its workflows, giving security teams the observability they need to assess real risk.

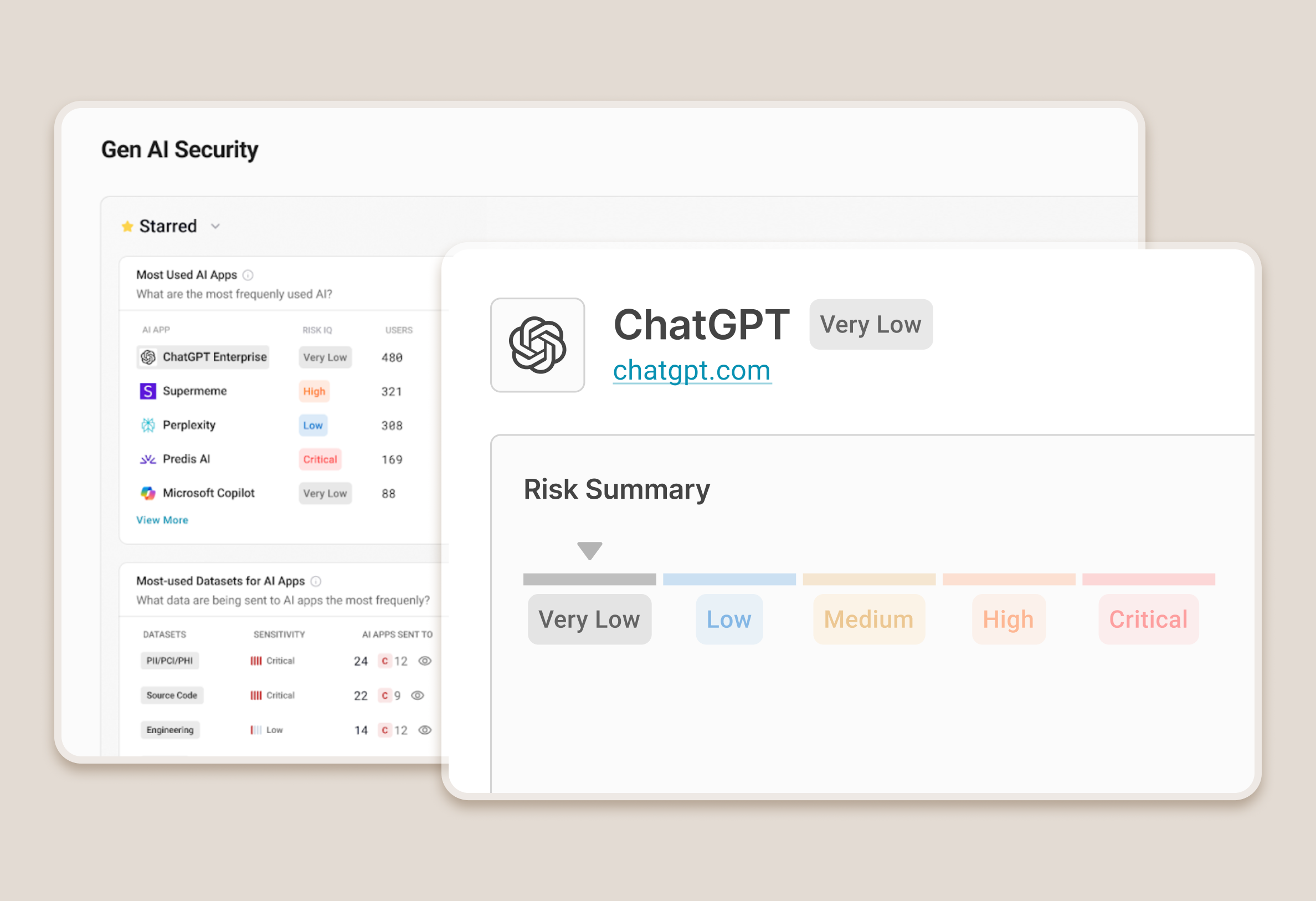

Assess risk with AI Risk IQ

Every AI tool and agent receives a risk score across five dimensions: data sensitivity, model integrity, compliance adherence, user access controls, and security infrastructure. This gives security teams a defensible, evidence-based risk posture.
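As a rough illustration of how five dimension scores could roll up into a single rating, here is a minimal sketch. The dimension names come from the description above; the 0-100 scale, the equal weighting, and the band cut-offs are assumptions made for the example, not Cyberhaven's published scoring method.

```python
from statistics import mean

# The five dimension names come from the text. The 0-100 scale, equal
# weighting, and band cut-offs are illustrative assumptions only.
DIMENSIONS = (
    "data_sensitivity",
    "model_integrity",
    "compliance_adherence",
    "user_access_controls",
    "security_infrastructure",
)


def overall_risk(scores: dict) -> float:
    """Average the five dimension scores (0 = lowest risk, 100 = highest)."""
    missing = [d for d in DIMENSIONS if d not in scores]
    if missing:
        raise ValueError(f"missing dimensions: {missing}")
    return mean(scores[d] for d in DIMENSIONS)


def risk_band(score: float) -> str:
    """Map a numeric score to a coarse band (hypothetical thresholds)."""
    if score >= 67:
        return "high"
    if score >= 34:
        return "medium"
    return "low"


# Hypothetical scores for an imaginary coding assistant.
example_tool = {
    "data_sensitivity": 80,
    "model_integrity": 40,
    "compliance_adherence": 60,
    "user_access_controls": 20,
    "security_infrastructure": 50,
}
print(risk_band(overall_risk(example_tool)))
```

Keeping the per-dimension scores alongside the overall band lets a reviewer see why a tool landed where it did, which is the point of an evidence-based rating.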

Enforce controls on the data, not just the tool

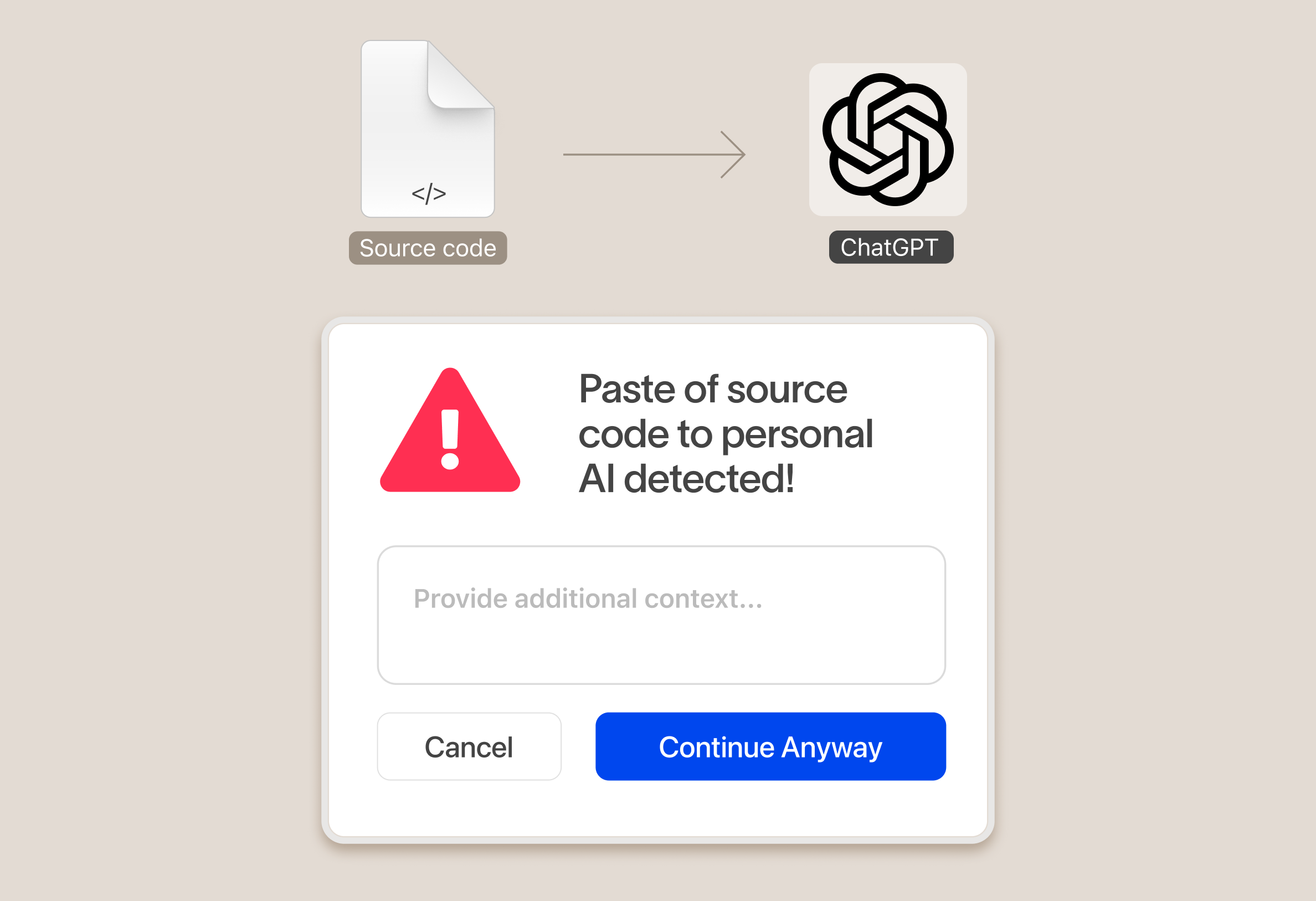

Cyberhaven applies risk-based policies at the data level, blocking or monitoring data flows based on context. When a developer pastes PII into an agent or an AI tool accesses a regulated file, Cyberhaven can act in real time, without disrupting legitimate work.

The AI security features security teams actually need

Shadow AI Discovery

Continuously inventories AI agents running across endpoints, browsers, CLIs, and IDEs, including tools that cloud-only security solutions cannot see.

Agentic AI Visibility

Reconstructs the full execution lifecycle of every agent interaction, capturing tool calls, data access, and multi-turn conversation context in a single view.

MCP Server Monitoring

Discovers and monitors Model Context Protocol servers and AI connectors across the enterprise, surfacing risk from integrations that operate outside traditional security controls.

AI Risk IQ Scoring

Assigns risk scores across five dimensions to every AI application and agent in your environment. Scores are maintained quarterly by Cyberhaven, with no customer configuration required.

AI Data Flow Control

Enforces runtime guardrails at the prompt and response level, blocking high-risk data movement, redirecting users to sanctioned tools, and coaching employees with plain-English policy explanations.

Data Lineage for AI Interactions

Connects every agent action to the data it touched, showing where that data originated and where it went next, so alerts become investigations.

Real-Time Enforcement

Stops unsafe or high-risk agent actions before they cause damage, with block, warn, and redact controls that operate across the full agentic workflow.

Usage and Adoption Insights

Surfaces AI adoption trends across the enterprise, categorizing applications as Sanctioned, Unsanctioned, Tolerated, or Restricted to support governance decisions.

Compliance Audit Readiness

Provides a comprehensive record of AI agent activity, data access, and policy enforcement actions to support audit workflows and regulatory reporting.

Unified Console and Reporting

Manages AI security policy, alerts, and reporting from the same console used for DLP and IRM, with no additional policy engine for existing customers to learn.

Built for the AI era, not retrofitted for AI

Legacy security tools were designed around networks, applications, and human-speed behavior. They can detect that an employee opened ChatGPT. They cannot trace what data an autonomous agent read, stored, transformed, and acted on across dozens of API calls.

Cyberhaven was built around data, not perimeters. At the core of the platform is a Data Lineage graph that tracks every piece of data across every workflow, regardless of the tool touching it. That context is what makes real-time, accurate enforcement possible at machine speed.

This is not a DLP engine with an AI detection layer added. It is a purpose-built platform for securing data in a world where AI agents operate as autonomous actors inside enterprise systems.

Unified visibility and enforcement

Cyberhaven AI & data security platform

One unified solution for protecting data wherever it lives and goes.

DSPM

Discover and classify data, detect risk as it flows between clouds and devices, and secure it automatically with Data Security Posture Management.

DLP

Protect data and stop exfiltration: coach users and block leaks across email, web, cloud, and devices with reimagined Data Loss Prevention.

IRM

Combine data and behavior signals to stop insider threats, clarify intent, and catch slow-burning risks with Insider Risk Management.

Frequently Asked Questions

What is AI security for enterprises?

AI security for enterprises is the practice of governing how AI tools, models, and autonomous agents access, process, and act on sensitive data across an organization. Unlike broader cybersecurity disciplines, enterprise AI security focuses specifically on the risks introduced when AI operates with direct access to business data and systems, including shadow tool usage, data exposure through generative or agentic AI, and autonomous agents executing workflows without direct human oversight.

How is AI security different from traditional DLP?

Traditional DLP was designed around networks, applications, and human-speed behavior. It can detect that an employee opened ChatGPT, but it cannot trace what data an autonomous agent read, stored, transformed, and acted on across dozens of API calls. AI security extends governance to those agent workflows. Cyberhaven does this with Data Lineage, which tracks data across every workflow regardless of the tool touching it, so policies are enforced on the data itself in real time rather than on the tool alone.

What are shadow AI agents and why do they matter?

Shadow AI agents are autonomous AI systems running on enterprise endpoints outside the visibility and approval of security teams. Shadow agents can take actions such as reading local files, invoking external APIs, executing code, and moving data across systems. Because they operate at the OS level and often bypass browser and network controls, they represent a data exposure risk that most existing security programs cannot detect or govern.

How does Cyberhaven detect AI tools on endpoints?

Cyberhaven uses an endpoint agent to continuously inventory AI tools as they appear across the organization, including mainstream SaaS generative AI applications, coding assistants running in IDEs and CLIs, open-source agent frameworks, and Model Context Protocol (MCP) servers.

What is an AI risk score?

Cyberhaven's AI Risk IQ scores every AI application and agent across five dimensions: data sensitivity, model integrity, compliance adherence, user access controls, and security infrastructure. Scores are maintained quarterly by Cyberhaven's research team, with no configuration required from customers. This gives security teams an evidence-based risk posture they can act on and defend during audits.

How does Cyberhaven enforce policies on AI-generated data?

Cyberhaven enforces policies at the data level, not the tool level, using Data Lineage to trace where sensitive data originated and how it has moved. When an AI agent accesses a regulated file or a developer pastes PII into a coding assistant, Cyberhaven can block, warn, or redact in real time based on data context and the risk score of the tool involved. Enforcement operates across the full agentic workflow, including prompts, responses, and downstream data flows, without disrupting legitimate work.
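To make the idea of data-level enforcement concrete, the sketch below shows one way a policy decision could weigh data context before the tool's risk score. Everything in it is hypothetical: the `DataFlow` fields, the label names, the 0-100 risk scale, and the threshold of 70 are assumptions for illustration, not Cyberhaven's policy engine or API.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    WARN = "warn"
    REDACT = "redact"
    BLOCK = "block"


@dataclass(frozen=True)
class DataFlow:
    # Hypothetical stand-ins for "data context" and "tool risk score".
    data_labels: frozenset   # e.g. frozenset({"pii"}) or frozenset({"regulated"})
    tool_risk: int           # illustrative 0-100 score, higher = riskier
    tool_sanctioned: bool


def decide(flow: DataFlow) -> Action:
    """Choose an action from the data first, then from the tool."""
    sensitive = bool(flow.data_labels & {"pii", "regulated"})
    if not sensitive:
        return Action.ALLOW      # non-sensitive data flows freely
    if not flow.tool_sanctioned or flow.tool_risk >= 70:
        return Action.BLOCK      # sensitive data, untrusted destination
    if "pii" in flow.data_labels:
        return Action.REDACT     # trusted tool, but strip the PII
    return Action.WARN           # trusted tool, regulated data: coach the user


# A developer pastes PII into a sanctioned, low-risk coding assistant.
print(decide(DataFlow(frozenset({"pii"}), tool_risk=20, tool_sanctioned=True)))
```

Because the first check is on the data labels, the same tool can be allowed for one prompt and blocked for the next, which is how legitimate work continues uninterrupted.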

Can Cyberhaven secure agentic AI like Claude Code or Copilot?

Yes. Cyberhaven's endpoint agent monitors agentic AI tools including coding assistants like Claude Code and Copilot, reconstructing the full execution lifecycle of each agent interaction: tool calls, data access, and multi-turn conversation context. Security teams can see what data each agent ingests, set risk-based policies on how sensitive data can be used within those workflows, and enforce controls in real time before data leaves the environment.
