The next major security problem enterprises will face won’t originate in the cloud. It will emerge on endpoints, where agentic AI is already operating with autonomy, authority, and access to sensitive data.

Look at the open-source AI agent OpenClaw (formerly known as Moltbot and Clawdbot), which has seen rapid adoption and made quite an impact in recent weeks. OpenClaw doesn’t ask for permission. It installs directly on endpoints, spawns processes, reads clipboard data, and executes actions across applications, all while sitting outside many enterprises’ DLP purview and security teams’ lines of sight. OpenClaw is just the latest in a series of AI agents operating inside enterprise systems with access to, and the ability to handle, sensitive data.

OpenClaw may look like an extreme example, a bot to block and move on from. Perhaps so. But it’s a prime example of a landscape undeniably coming into focus: AI agents running on behalf of your employees, in tandem with your employees, and with all of the risks you assume with your employees (and then some).

Other agents, such as Claude Code, Codex, and Cowork, operate similarly to OpenClaw, and there’s no way to block them all. In some ways AI agents are more capable than employees, and in some ways they’re less capable, yet far less security is allocated to them.

Another new example, which has already garnered the support of major organizations such as State Farm, Oracle, and Uber, is OpenAI Frontier, which bills itself as “a new platform that helps enterprises build, deploy, and manage AI agents that can do real work.”

Both are part of a rapidly growing trend of AI moving from in-browser GenAI to agent-focused AI that lives and operates on individual endpoints, and Cyberhaven has seen this trend inching upward long before OpenClaw and Frontier captured the attention of coders and journalists alike. Cyberhaven Labs’ own research shows that roughly half of all developers (49.5%) were using desktop-based coding assistants by December 2025, up from about 20% at the start of 2025, and nearly 23% of enterprises have adopted agent-building platforms as of the start of 2026.

At the same time, data itself has become the defining source of competitive advantage. Models are increasingly interchangeable. What differentiates enterprises is the data they collect, the context they build around it, and the workflows that give it meaning. As AI systems gain autonomy at the endpoint, that data is no longer just valuable. It is exposed in ways most security programs were never designed to handle.

Agents like OpenClaw and Frontier are the logical next step in how AI is being deployed, and the way they operate has spurred serious data security concerns. The fact is that the center of gravity has moved, and security strategies haven’t caught up yet.

Open-Source Endpoint Agents Create New Data Risks

OpenClaw’s architecture exposes the real problem: it maintains a persistent context window stored locally, essentially a searchable index of every interaction, every data fragment, every credential it’s touched. Unlike browser-based tools where you can at least intercept HTTPS traffic or enforce SSO, these endpoint agents operate at the OS level, using accessibility APIs and clipboard monitoring that bypass traditional network controls entirely.

In practice, this means data is no longer moving through clearly defined business workflows that security teams can model in advance. It is being pulled, transformed, and reused by agents that operate continuously, without the natural boundaries legacy controls depend on.

Our data shows that 39.7% of AI interactions involve sensitive data. Until recently, those interactions came almost exclusively from human employees, who have at least some security training and operate under implicit cultural norms about what is and is not sensitive, and how it should (and should not) be handled. Now multiply that by agents that run continuously in the background, have full filesystem access, store interaction history indefinitely (they never forget or get distracted), and often sync to developer-controlled infrastructure you don’t govern.

AI is here. Organizations are embracing AI (62% of enterprises are experimenting with AI agents) and individual users are following suit (organizations with the highest rates of AI adoption are using more than 300 GenAI tools within their enterprise environments).

So, what are security teams to do?

You Can’t Just Block AI

In practice, enterprises have three main options available to them.

Option 1: Block AI Usage. The pattern is consistent for organizations trying to secure these new tools: block AI at the network level, and users route around it. Local LLMs like Ollama require no internet connection. API proxies take five minutes to set up. Personal devices are always an option.

Blocking doesn’t stop adoption; it just makes it invisible. The tighter your grip, the more slips through your fingers.

Option 2: Block Some AI, Create Rules Around Others.

Every rule has a thousand exceptions, and with new tools and agents appearing seemingly out of thin air every week, how do you know what to block, or even which agents and tools are in use? It’s a Whack-a-Mole nightmare. You approve GitHub Copilot, so developers switch to Cursor. You approve Cursor, they install Continue.dev. Each new tool requires procurement review, security assessment, and integration testing. Meanwhile, your software development rate drops and shadow AI usage increases. By the time your organization has assessed OpenClaw, three new agent frameworks have hit the market.

This option creates an immense burden on security teams who may not – depending on their tech stack and integrations – have visibility into what AI agents are in use on endpoints. Security teams will spend their time arguing about which tools to block while sensitive data is already long gone.

Option 3: Focus On The Data.

Security teams are looking in the wrong places when it comes to security in the age of AI. You can’t solely secure the systems, because legacy tech can’t adequately see or protect these emerging tools. People will always be a variable, and trying to build security entirely around their behavior will never succeed.

But you can protect the data.

The goal of security has never been to stop work from happening. It is to allow data to move freely inside legitimate business workflows, while preventing it from escaping those boundaries. The challenge is that those workflows are complex, constantly changing, and rarely documented in a way security teams can translate into rules.

The answer is a deep understanding of the data, applied at machine speed. Traditional data loss prevention (DLP) asks: ‘What applications are touching this file?’ But the right question is: ‘What data relationships exist, how are they changing, and what autonomous actors are participating in those changes?’

This requires rethinking telemetry. You need to instrument data flows, not just network packets. You need policy enforcement that understands context: Is this a developer testing code with synthetic data, or is production PII being used to fine-tune an unapproved model?
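As a rough illustration of what context-aware enforcement could look like, here is a minimal Python sketch. It is not Cyberhaven’s implementation: the `DataFlowEvent` fields, the `data_origin` labels, and the `decide` function are all hypothetical names invented for this example, and the rules encode only the single distinction described above (synthetic test data versus production PII headed to an unapproved tool).

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    ALLOW_WITH_MONITORING = "allow_with_monitoring"
    BLOCK = "block"


@dataclass(frozen=True)
class DataFlowEvent:
    """One observed movement of data (all field names are hypothetical)."""
    actor: str                  # human user or agent process
    destination: str            # tool or model receiving the data
    data_origin: str            # assumed label, e.g. "synthetic" or "production"
    contains_pii: bool
    destination_approved: bool


def decide(event: DataFlowEvent) -> Action:
    """Pick an action from the context of the flow, not from the app name alone."""
    is_production_pii = event.contains_pii and event.data_origin == "production"
    if is_production_pii:
        # Production PII flowing to an unapproved tool is the case to stop.
        if not event.destination_approved:
            return Action.BLOCK
        # Approved destination: let work continue, but keep a record.
        return Action.ALLOW_WITH_MONITORING
    if not event.destination_approved:
        # Non-sensitive data to an unknown tool: allow, but watch it.
        return Action.ALLOW_WITH_MONITORING
    return Action.ALLOW


testing = DataFlowEvent("dev-1", "coding-agent", "synthetic", False, True)
fine_tune = DataFlowEvent("dev-1", "unapproved-model", "production", True, False)
decisions = [decide(testing), decide(fine_tune)]
```

The point of the sketch is that the same developer and the same kind of tool can produce different outcomes depending on what the data is and where it came from.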

Understanding data at this level requires modeling how events relate to one another over time, not treating each action as an isolated signal. To truly understand these flows, you need more than raw telemetry. You need a context graph that stitches together every data event, every user action, and every decision an agent makes into a coherent, queryable structure. By capturing those relationships over time, teams can trace not just paths but the causal context around why and how data was accessed, modified, or moved.
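To make the idea of a context graph concrete, the following Python sketch models data events as edges between artifacts and walks backwards to recover lineage. It is a simplified illustration under stated assumptions, not a description of any product’s internals: the `ContextGraph` class, its method names, and the artifact names in the example are all hypothetical, and a real system would also need to account for time ordering during traversal, partial copies, and scale.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Edge:
    source: str       # artifact the data came from
    target: str       # artifact the data ended up in
    actor: str        # user or agent that performed the action
    action: str       # e.g. "read", "embed", "api_call"
    timestamp: float


class ContextGraph:
    """Toy graph: nodes are artifacts, edges are data events."""

    def __init__(self):
        self._incoming = defaultdict(list)

    def record(self, source, target, actor, action, timestamp):
        self._incoming[target].append(Edge(source, target, actor, action, timestamp))

    def lineage(self, artifact):
        """Return every recorded event upstream of `artifact`, oldest first."""
        seen, stack, edges = set(), [artifact], []
        while stack:
            node = stack.pop()
            if node in seen:
                continue
            seen.add(node)
            for edge in self._incoming.get(node, []):
                edges.append(edge)
                stack.append(edge.source)
        return sorted(edges, key=lambda e: e.timestamp)


# Hypothetical sequence: an agent reads a file, embeds it, then calls an API.
graph = ContextGraph()
graph.record("customers.csv", "agent_context", "local-agent", "read", 1.0)
graph.record("agent_context", "embedding_store", "local-agent", "embed", 2.0)
graph.record("embedding_store", "external_api", "local-agent", "api_call", 3.0)

trail = graph.lineage("external_api")
origins = [edge.source for edge in trail]
```

Here `trail` holds the three events that lead back from the outbound API call to the original file, which is the kind of question an isolated alert on the final call cannot answer by itself.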

Legacy Security Companies Were Built For The Wrong Battle

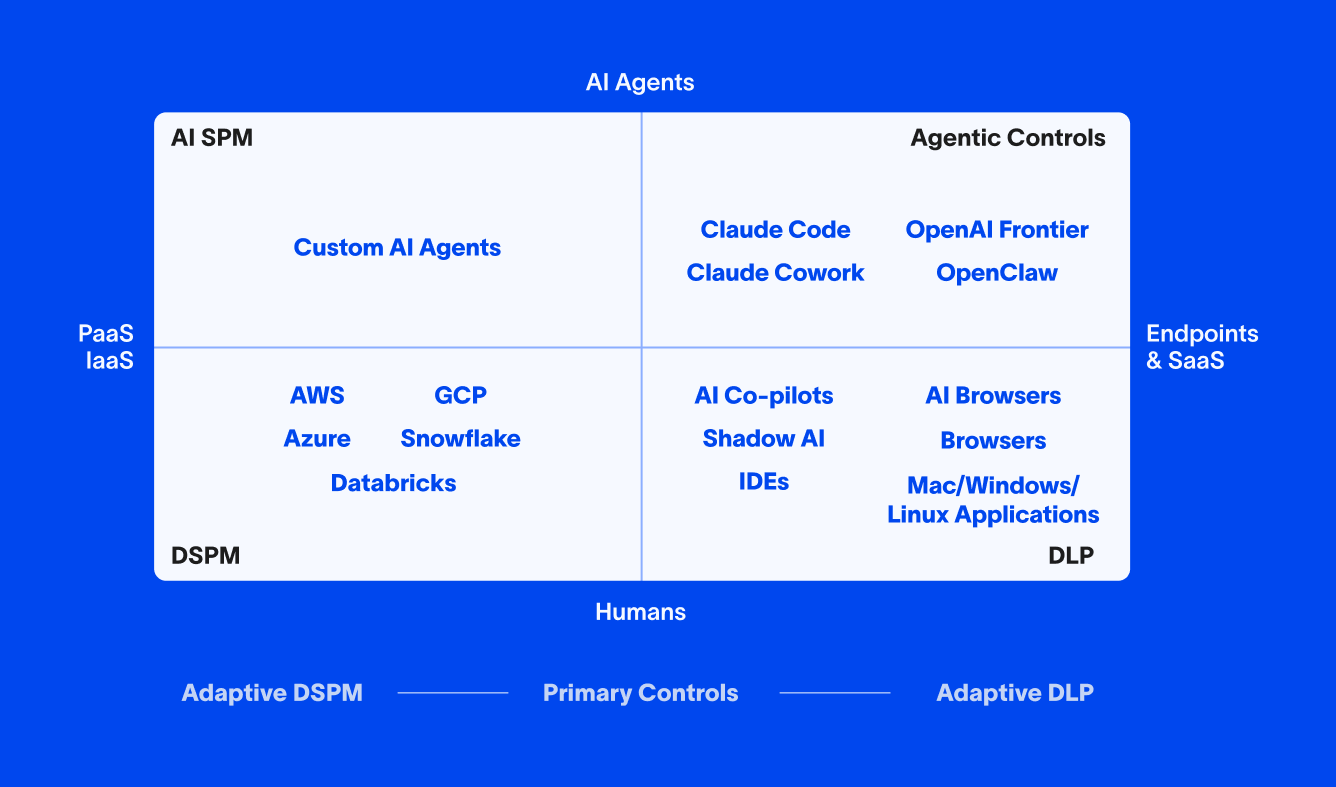

What these AI agents are showing us is that risk, data, and behavior all converge on the endpoint, so if you focus visibility, data tracing, monitoring, and enforcement there, a light will begin to shine. However, for most organizations, that light will be dim at best. Legacy security companies have focused primarily on cloud and network perimeters and on preventing outsiders from gaining access. The balance has shifted from outside-in risk to inside-out risk.

That’s because endpoint detection and response (EDR), legacy DLP, and data security posture management (DSPM) weren’t designed for a world where autonomous agents operate continuously on endpoints, outside your already decayed network perimeter, using APIs your security stack has never heard of. Each tool may be able to gain some visibility, but none can see the whole picture of how data moves and what it means to an organization. The architectural mismatch is fundamental.

Pre-AI security tools assume:

- Data moves through networks you control

- Humans are the actors (with human-speed patterns)

- Applications are known, managed, and relatively static

- Risk events have clear signatures you can detect

But agentic AI breaks every one of these assumptions.

Pre-AI tools also do not model the relationships between events over time. A context graph goes beyond isolated signals by connecting data actions, artifact histories, user intent, and system events into a unified structure. That context is what gives real lineage its power for threat detection, risk scoring, and incident investigation.

Ask yourself:

- Can you enumerate which AI agents are running on endpoints right now?

- Can you trace data lineage when an AI agent reads a file, stores it in embeddings, and potentially exfiltrates fragments across multiple API calls?

- Can you differentiate between a developer using an AI agent with synthetic test data and one using production PII, and enforce policies accordingly?

The answers probably aren’t what you want them to be, and that’s the reality among the majority of enterprises still reliant on legacy security solutions. They are solving yesterday’s problems admirably. But agentic AI isn’t yesterday’s problem. It’s a different control plane entirely.
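The first question in that list, enumerating agents on endpoints, can be approximated very roughly by matching running process names against a watchlist. The Python sketch below shows the idea only. The watchlist entries are assumptions: the tool names come from this article, but the actual process or binary names those tools use are not confirmed here, and the `match_agents` function is a hypothetical helper. The process list is passed in as plain strings; on a real endpoint it would come from the operating system.

```python
# Hypothetical watchlist: label -> lowercase substrings to look for.
# The substrings are illustrative guesses, not verified binary names.
WATCHLIST = {
    "OpenClaw": ("openclaw", "moltbot", "clawdbot"),
    "Ollama": ("ollama",),
    "Cursor": ("cursor",),
}


def match_agents(process_names, watchlist):
    """Group process names by the watchlist label they appear to match.

    Matching is a case-insensitive substring test, which is deliberately
    naive: it can produce false positives and is trivial to evade by renaming.
    """
    found = {}
    for name in process_names:
        lowered = name.lower()
        for label, needles in watchlist.items():
            if any(needle in lowered for needle in needles):
                found.setdefault(label, []).append(name)
    return found


# Sample input standing in for a real process listing.
sample_processes = ["bash", "ollama serve", "OpenClaw-helper", "chrome"]
detected = match_agents(sample_processes, WATCHLIST)
```

Name matching like this only answers "what is running", and only for tools you already know about. It says nothing about what data those agents touched, which is why the second and third questions are harder.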

While legacy vendors scramble to bolt on “AI features” to tools built for network-centric, human-speed workflows, the risk compounds. Shadow AI proliferates. Sensitive data flows into agent memory stores outside your governance. And security teams are left trying to govern AI adoption with tools that fundamentally cannot see what’s happening at the endpoint.

Cyberhaven Was Built for This Moment

While legacy vendors scramble to retrofit AI capabilities onto architectures designed for firewalls and malware detection, Cyberhaven has been solving this problem since day one — because we never believed in their approach in the first place.

The legacy approach is fundamentally siloed:

- Systems-focused tools protect the cloud and the device

- People-focused tools monitor user behavior and knowledge flows

Both assume human speed. Both assume you control the entry and exit points. Both are blind to what’s actually happening.

AI agents shatter this model entirely. They affect systems and people and data. They operate in the spaces between security controls. The siloed approach, stitching together point solutions, each with a fragment of visibility, was already fragile. Agentic AI broke it completely.

Cyberhaven isn’t another security tool. It’s an architectural response to a changed reality.

We built a unified data model that treats data at rest and data in motion as part of the same system, because the boundary between them no longer matters. What matters is whether data stays within the workflows where it belongs.

We don’t start with applications or network traffic. We start with data, tracing it at the fragment level, across every workflow, in real time. At the core of that capability is a context graph that captures the full lineage of every piece of data across cloud, endpoints, applications, and users. That graph is not a static snapshot. It is a real-time model of how data relates to other data and actions, and it fuels classification, risk assessment, detection, and response with a level of precision legacy tools cannot approach.

With automated investigations, policyless detection, contextual analysis, semantic understanding, and a complete data history, our agentic AI is able to understand every piece of data within your organization, identify when it’s at risk, and take action to protect it.

Here’s what that means in practice:

We see what’s invisible to everyone else. Cyberhaven continuously monitors AI tools at the endpoint, not just the sanctioned SaaS platforms, but the whole ecosystem: local agents, background processes, API calls, clipboard events, and more. We track employee usage patterns and data flows as they happen, building a real-time map of which AI tools are active, who’s using them, and what data they’re touching.

We assess risk, not just detect activity. Every AI tool gets a detailed risk profile based on actual behavior: What data has it accessed? How is that data being used? Does it align with your policies and acceptable risk thresholds? This is a continuous risk assessment that adapts as tools evolve and usage patterns change.
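A behavior-based risk profile of this kind can be pictured with a small scoring function. The Python sketch below is purely illustrative and is not how Cyberhaven computes risk: the `ToolBehavior` fields, the weights, and the `risk_score` function are hypothetical. The inputs echo the agent traits discussed earlier in this article, namely how much sensitive data a tool touches, whether it retains history, and whether it syncs to infrastructure you don’t govern.

```python
from dataclasses import dataclass


@dataclass
class ToolBehavior:
    """Observed behavior of one AI tool (all fields are hypothetical)."""
    tool: str
    events_total: int
    events_sensitive: int
    syncs_to_external_infra: bool
    retains_history: bool


def risk_score(behavior, sensitive_weight=0.5, external_weight=0.3,
               retention_weight=0.2):
    """Return a score in [0, 1]; the weights are arbitrary placeholders."""
    if behavior.events_total > 0:
        sensitive_ratio = behavior.events_sensitive / behavior.events_total
    else:
        sensitive_ratio = 0.0
    score = sensitive_weight * sensitive_ratio
    if behavior.syncs_to_external_infra:
        score += external_weight
    if behavior.retains_history:
        score += retention_weight
    return min(score, 1.0)


local_agent = ToolBehavior("local-agent", events_total=10, events_sensitive=4,
                           syncs_to_external_infra=True, retains_history=False)
agent_score = risk_score(local_agent)
```

Because the score is recomputed from observed events, it changes as a tool’s behavior changes, which is the sense in which such an assessment is continuous.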

We enforce controls where it matters: on the data itself. By controlling data flowing to and from AI tools, Cyberhaven enforces risk-based policies on data egress and ingress. When a developer pastes a database connection string into an AI agent, we see it. When an AI tool accesses customer PII, we can stop it (or allow it with monitoring) based on context. We make these decisions in milliseconds, without breaking workflow.

We enable AI adoption, not just police it. Our platform shows you your most active AI users, adoption patterns by team and tool, and exactly where sensitive data is flowing. Use these insights to educate employees, set intelligent guardrails, and drive productive, organization-wide AI adoption. The goal is to make AI usage safe, visible, and aligned with business objectives.

This approach works because it’s fundamentally different. We’re not retrofitting AI detection onto a DLP engine built for email attachments. We’re not bolting agent monitoring onto an EDR platform designed for malware signatures. We built data-centric security from the ground up, and that architecture is the only thing that works when autonomous agents operate at machine speed, outside traditional perimeters, with access to your most sensitive data.

The reality: every enterprise will adopt AI agents. The only question is whether you’ll have visibility, control, and governance when they do, or whether you’ll be flying blind, discovering risks only after sensitive data has already left your environment.

As AI agents become a permanent part of how work gets done, data protection will stop being optional. The organizations that succeed will be the ones that made it usable, visible, and aligned with how their business actually operates.

Cyberhaven has been protecting data in the age of AI since before AI agents dominated the headlines because we understood from day one that protecting data means following it everywhere it goes, at the speed it moves, regardless of the tool touching it.
