Security teams often focus governance efforts on the most popular AI tools. But the real risk question isn't which tools employees use most. It's which tools are growing fastest and what data those tools can reach.

New data from Cyberhaven Labs shows that the AI categories posting the largest year-over-year growth numbers are the same categories with privileged access to source code, credentials, customer contracts, and internal architecture. Rapid adoption plus high data sensitivity is where the governance gap creates the most exposure.

What the Data Actually Shows About AI Adoption Risk

AI adoption risk is the gap between how fast employees adopt AI tools and how fast security programs can govern what those tools access. It isn't a function of any single tool's popularity. It's a function of adoption velocity multiplied by data sensitivity.
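A minimal sketch of that relationship, for teams that want to rank categories rather than argue about them. The growth figures are the ones reported in this article. The `AICategory` type, the `risk_score` function, and the 1-to-5 sensitivity weights are illustrative assumptions, not part of the Cyberhaven Labs data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AICategory:
    name: str
    yoy_growth_pct: float  # year-over-year growth, in percent
    sensitivity: int       # hypothetical 1-5 weight chosen by the security team


def risk_score(category: AICategory) -> float:
    """Adoption velocity multiplied by data sensitivity."""
    velocity = category.yoy_growth_pct / 100.0
    return velocity * category.sensitivity


categories = [
    AICategory("all endpoint AI native apps", 509.0, sensitivity=3),
    AICategory("coding assistants", 357.0, sensitivity=5),
]

# Highest score first: this is the order in which to build coverage.
ranked = sorted(categories, key=risk_score, reverse=True)
for c in ranked:
    print(f"{c.name}: {risk_score(c):.2f}")
```

With these assumed weights, coding assistants rank first despite the lower raw growth number, because the sensitivity weight dominates. Different weights will produce a different order, which is the point: the ranking depends on what the tool can reach, not only on how fast it spreads.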

The Cyberhaven Labs data, based on hundreds of thousands of employees, makes that gap concrete.

Total enterprise use of endpoint-based AI native apps, including the Claude, ChatGPT, and Copilot desktop apps, grew 509% in a single year, from February 2025 to February 2026. A 509% increase means usage ended the period at roughly six times its starting level, with the AI attack surface expanding along with it. Most security programs did not scale at anything close to that rate.
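Percentage growth and multiples are easy to conflate: an increase of N% corresponds to an end-over-start multiple of 1 + N/100. The short sketch below applies that conversion to the growth figures reported in this article. The `growth_multiplier` helper is a hypothetical name used for illustration.

```python
def growth_multiplier(growth_pct: float) -> float:
    """Convert a percentage increase into an end-over-start multiple."""
    return 1.0 + growth_pct / 100.0


reported_growth = [
    ("endpoint AI native apps", 509.0),
    ("coding assistants", 357.0),
    ("Claude", 5680.0),
]

for label, pct in reported_growth:
    print(f"{label}: +{pct:g}% -> {growth_multiplier(pct):.2f}x")
```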

The more important finding isn't the aggregate number. It's where the growth is concentrated.

Coding Assistants: The Highest-Risk Category, Growing the Fastest

Coding assistants are AI applications that access, generate, and modify vital enterprise code in real time, often with direct integration into a developer's local environment, version control system, and internal repositories.

Enterprise adoption of coding assistants grew 357% year over year. That growth rate makes coding assistants the fastest-expanding AI category in the dataset and also the category where a data governance gap carries the highest potential blast radius.

The reason is access. Coding assistants don't just process text prompts. They read files, traverse directory structures, and in agentic configurations, they execute commands. The data they can reach includes source code containing proprietary logic and product IP, credentials and API keys embedded in configuration files or environment variables, internal architecture documentation referenced in comments or linked files, and build and deployment scripts that describe production infrastructure.

When a developer pastes a code block into a coding assistant, or when an agentic coding tool browses a local repo autonomously, the data exposure isn't hypothetical. It's immediate. Security teams that haven't built specific detection and policy coverage for coding assistant activity are operating with a significant blind spot, one that is growing by hundreds of users per quarter.
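As a rough illustration of what coding-assistant-specific detection can look like, the sketch below scans a block of text for a few common secret shapes before it leaves the endpoint. The pattern set, the `find_secrets` function, and the sample snippet are assumptions made for this example. A production rule set would be much broader and tuned against false positives.

```python
import re

# Illustrative patterns only; not an exhaustive or authoritative rule set.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "assigned_secret": re.compile(
        r"(?i)\b(?:api[_-]?key|secret|token|password)\b\s*[:=]\s*['\"][^'\"\s]{8,}['\"]"
    ),
}


def find_secrets(text: str) -> list[tuple[str, int]]:
    """Return (pattern_name, line_number) for each suspected secret in text."""
    hits = []
    for line_no, line in enumerate(text.splitlines(), start=1):
        for name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                hits.append((name, line_no))
    return hits


# Sample paste containing placeholder values, not real credentials.
snippet = '''\
import os
API_KEY = "sk_test_0123456789abcdef"
region = "us-east-1"
aws_id = "AKIAIOSFODNN7EXAMPLE"
'''

for name, line_no in find_secrets(snippet):
    print(f"line {line_no}: possible {name}")
```

Pattern matching like this only covers pasted or transmitted text. It does not address an agentic tool reading files directly, which is why endpoint-level visibility matters alongside content rules.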

Model Diversity Is Widening the Visibility Gap

The second major finding in the Cyberhaven Labs data is that employees aren't converging on a single AI tool. They're using whichever model fits their workflow, regardless of what IT has sanctioned.

Claude, for example, saw a 5,680% year-over-year increase. That's not an anomaly. It reflects a pattern playing out across the AI market: as new models prove useful for specific tasks, adoption follows quickly, often ahead of any formal approval process.

For security practitioners, this creates a concrete operational problem. A monitoring strategy built around ChatGPT misses Claude activity entirely. A Copilot-focused policy doesn't account for what happens when an engineer on a Mac opens a Claude desktop app to debug production code. Each unmonitored tool is a gap in the data you need to detect exfiltration, enforce policy, and investigate incidents.

Visibility across all models, not just the most popular one, is now a baseline requirement for any AI security program, not an advanced capability.
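The gap is easy to express as a set difference. In the sketch below, the two inventories and the `coverage_gap` function are hypothetical: `observed_tools` stands in for whatever endpoint telemetry reports, and `monitored_tools` for whatever the current policy actually covers.

```python
def coverage_gap(observed: set[str], monitored: set[str]) -> set[str]:
    """Tools seen on endpoints that no monitoring policy covers."""
    return observed - monitored


# Hypothetical inventories for a ChatGPT-only monitoring strategy.
monitored_tools = {"ChatGPT"}
observed_tools = {"ChatGPT", "Claude", "Copilot"}

gap = coverage_gap(observed_tools, monitored_tools)
covered_fraction = len(observed_tools & monitored_tools) / len(observed_tools)

print("unmonitored:", sorted(gap))
print(f"tools covered: {covered_fraction:.0%}")
```

Counting tools is a crude measure, since it ignores how much activity each tool carries, but it is a quick first check on whether a policy was scoped to a vendor rather than to the fleet.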

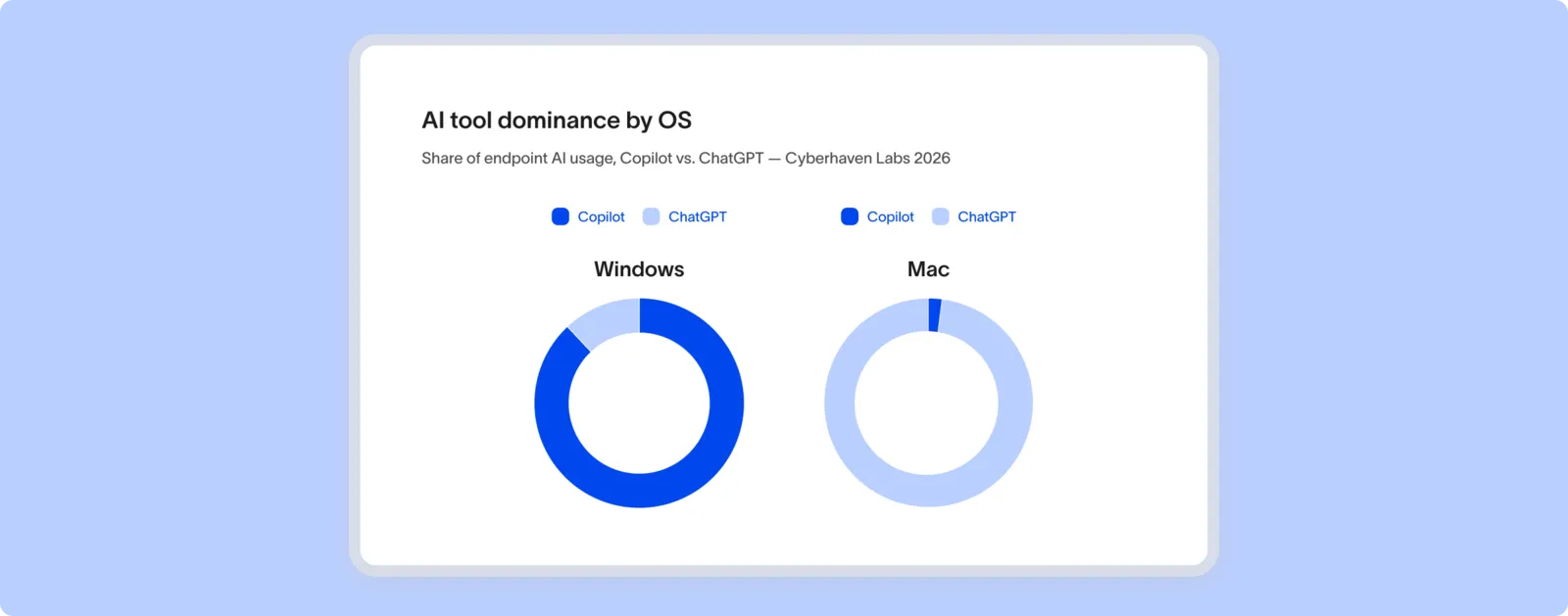

OS Fragmentation Makes a Single-Vendor Approach Insufficient

The Cyberhaven Labs data also reveals a fragmentation pattern that affects how security teams should think about AI governance architecture.

Microsoft Copilot commands 54% of endpoint AI usage overall in 2026. But that number hides a sharp platform split. On Mac, ChatGPT is 40 times more popular than Copilot. On Windows, Copilot is 7.6 times more popular than ChatGPT.

Most enterprises run mixed fleets. That means the AI tools in use, the data access patterns those tools create, and the risk profiles they generate differ significantly depending on which OS a given employee is running. A SaaS-only AI governance approach, or one built entirely around Microsoft's native tooling, misses the majority of endpoint AI activity on Mac.

If your detection and policy coverage is Copilot-centric, you have full visibility into roughly one platform segment of your fleet and partial visibility into the rest.
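To see how lopsided that split is, the popularity ratios above can be turned into approximate shares. This sketch makes two loud assumptions: it reads "N times more popular" as "N times as popular," and it considers only ChatGPT and Copilot on each platform, ignoring every other tool. The `share_from_ratio` helper is a hypothetical name.

```python
def share_from_ratio(ratio: float) -> float:
    """Share held by the leading tool in a two-tool comparison,
    given that it is `ratio` times as popular as the other tool."""
    return ratio / (ratio + 1.0)


# Ratios as reported: ChatGPT vs. Copilot on Mac, Copilot vs. ChatGPT on Windows.
mac_chatgpt_share = share_from_ratio(40.0)
windows_copilot_share = share_from_ratio(7.6)

# What a Copilot-only policy would see of this two-tool activity on Mac.
mac_copilot_share = 1.0 - mac_chatgpt_share

print(f"Mac, ChatGPT share of the pair:     {mac_chatgpt_share:.1%}")
print(f"Mac, Copilot share of the pair:     {mac_copilot_share:.1%}")
print(f"Windows, Copilot share of the pair: {windows_copilot_share:.1%}")
```

Under those assumptions, a Copilot-only policy would see only a few percent of the ChatGPT-plus-Copilot activity on Mac while covering most of it on Windows.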

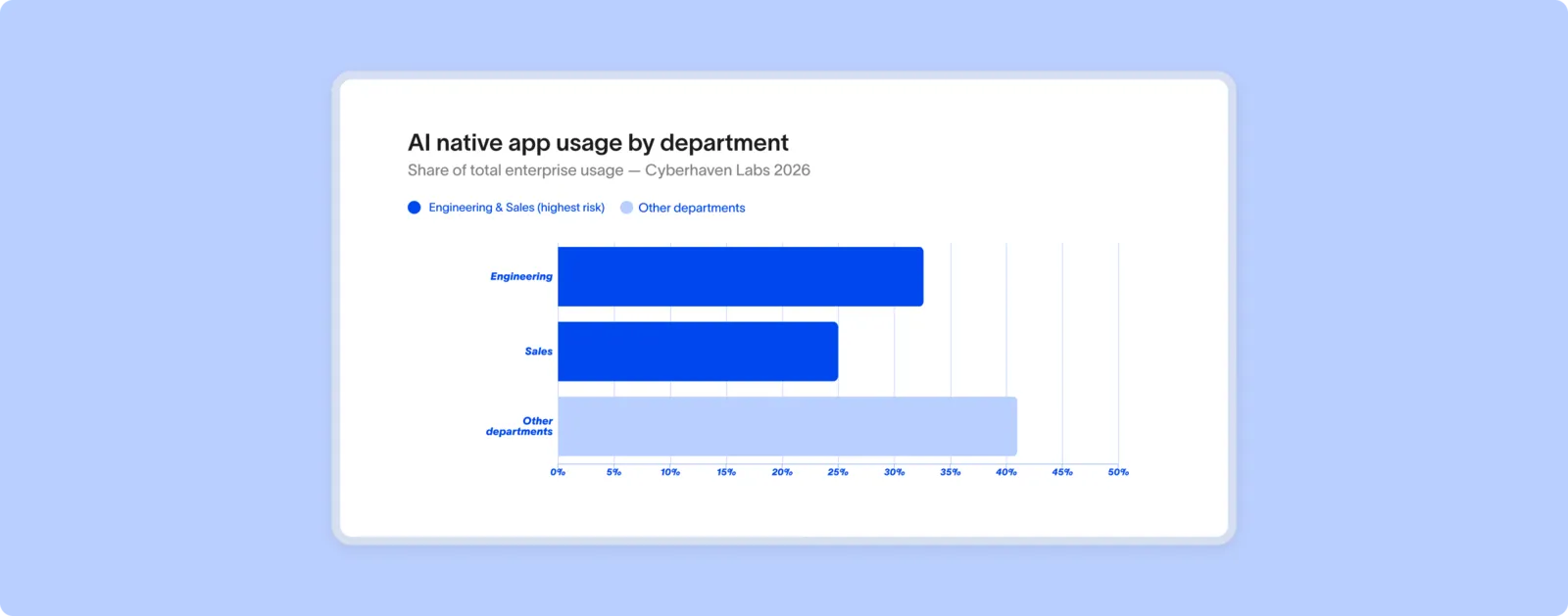

Where AI Usage Concentrates by Department

The departments driving the highest AI adoption are also the departments where data exfiltration or accidental leakage would be most damaging.

Engineering accounts for 33% of all AI native app usage across the enterprises in the Cyberhaven dataset. Sales accounts for 25%. Together, those two functions drive nearly 60% of total usage. Engineering holds source code, infrastructure credentials, and product IP. Sales handles customer data, contracts, pricing, and deal information.

Security programs that treat AI governance as a uniform, policy-wide problem miss this concentration. A DLP analyst building detection logic needs to understand that the highest-volume AI activity is happening in the functions with the most sensitive data, and that coding assistants, the fastest-growing category in that dataset, are disproportionately an engineering tool.

That's not an argument for blocking AI in engineering or sales. It's an argument for calibrating your detection and response posture to where the actual risk sits.

See how AI adoption and usage varies not just across departments, but industries, with our most recent data for financial services, manufacturing, and professional services.

What This Means for Security Practitioners

The Cyberhaven Labs 2026 data points to three operational gaps that security practitioners should assess against their current programs.

- Coverage across tools, not just the most popular one: If your AI monitoring is scoped to a single vendor or a single model, you don't have a complete picture. Claude's 5,680% growth is one example of how fast that picture can change.

- Coding assistant-specific detection: General AI policy doesn't account for the specific data access patterns that coding assistants create. Source code, credentials, and API keys require targeted detection rules, not just broad AI-use policies.

- Endpoint visibility across OS: If your AI governance architecture relies on cloud or SaaS-layer monitoring, you're missing native app activity on endpoints and the fragmentation between Mac and Windows AI usage patterns that the Cyberhaven data documents.

Better understand how your enterprise can secure AI usage with Governing the Autonomous Enterprise: A Security Framework for Agentic AI.

Frequently Asked Questions

Why are coding assistants considered the highest-risk AI category?

Coding assistants have direct access to source code, credentials, API keys, and internal architecture, all data types that carry significant IP and security value. Unlike general-purpose chat tools, coding assistants often operate with file system access and, in agentic configurations, can execute commands autonomously. That combination of privilege and autonomy makes them the category where ungoverned access carries the most potential for harm.

What does "endpoint AI adoption" mean, and why does it matter for security?

Endpoint AI adoption refers to employees using AI native apps (desktop applications like Claude, ChatGPT, or Copilot) directly on their devices, rather than through a browser or SaaS interface. Endpoint apps can access local files, environment variables, and system resources that cloud-based tools cannot. Security teams that monitor only SaaS-layer activity miss this entire class of AI usage.

How do I get visibility into which AI tools employees are using?

Effective visibility requires monitoring at the endpoint level, across all AI tools and models, not just the ones IT has approved. Data lineage tracking that follows data from source to destination, including AI tools, provides the coverage needed to detect exfiltration and enforce policy across a mixed AI footprint.

Why does OS fragmentation affect AI security strategy?

Because different AI tools dominate on different platforms. ChatGPT is far more prevalent on Mac; Copilot dominates on Windows. Enterprises with mixed fleets have different AI risk profiles across their endpoint population, which means a single-vendor governance approach leaves significant gaps.

How fast is enterprise AI adoption actually growing?

According to Cyberhaven Labs data, total endpoint AI adoption grew 509% year over year between February 2025 and February 2026. Coding assistants grew 357% in the same period. Claude grew 5,680%. These are not gradual shifts. They represent a rapid expansion of the AI attack surface that most governance programs have not matched.
