Understanding insider risk and insider threat is critical for security leaders. While both involve trusted individuals within your organization, the distinction between them shapes how you detect, respond to, and prevent internal security incidents. Insider risk involves potential harm from unintentional or risky behaviors, while insider threat refers to deliberate, malicious actions.

What is Insider Risk?

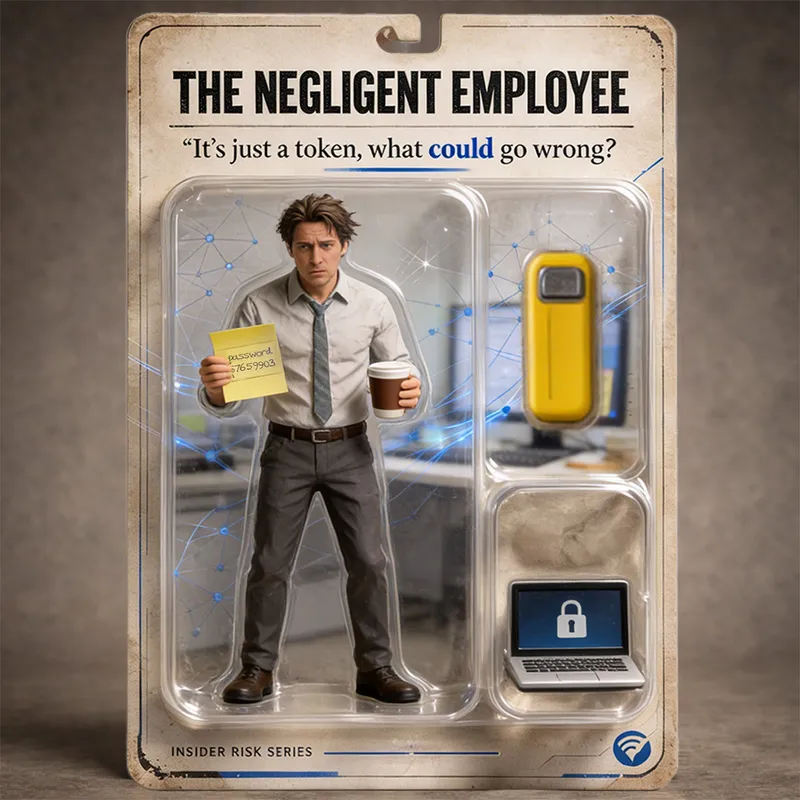

Insider risk is the potential for an authorized user to expose your organization to harm, whether through negligence, poor judgment, or lack of awareness. The defining characteristic of insider risk is that it does not require malicious intent. An employee sending sensitive data to a personal email account to finish work at home creates insider risk. A contractor using outdated software that introduces malware creates insider risk. These actions occur during normal workflows by users with legitimate access.

Insider risk encompasses a broad spectrum of behaviors:

- Data mishandling and unauthorized sharing

- Compliance violations, such as improper data classification

- Shadow IT usage without security oversight

- Weak password practices and credential sharing

- Accidental exposure through misconfigured cloud permissions

The challenge with insider risk is that traditional perimeter defenses cannot stop it. The user already has access. Security teams must instead focus on understanding behavior, context, and intent to identify when legitimate actions introduce vulnerabilities.

Organizations that treat all internal activity as potentially malicious create friction and erode trust. Those that ignore insider risk entirely leave themselves exposed. The solution lies in balancing visibility with empathy, recognizing that most employees want to do their jobs well and securely.

What are Insider Threats?

Insider threat refers to deliberate, malicious actions taken by someone within your organization to cause harm. Unlike insider risk, insider threat is defined by intent. The actor knows what they are doing and acts against the organization's interests. Motives include financial gain, revenge, ideological alignment, or coercion by external actors.

Common insider threat scenarios include:

- Theft of intellectual property before leaving for a competitor

- Sabotage of systems by disgruntled employees

- Data exfiltration for sale on criminal marketplaces

- Corporate espionage conducted on behalf of nation-states or competitors

- Destruction of critical data or infrastructure following termination

Insider threats are especially dangerous because the actors already understand your systems, know where sensitive data resides, and often know how to evade security controls. They operate with legitimate credentials, making detection difficult. A disgruntled system administrator might retain access after termination and delete databases. An engineer planning to leave might slowly copy proprietary code over weeks to avoid triggering alerts.

The sophistication of insider threats varies. Some are opportunistic and impulsive, while others are carefully planned and executed over months. In some cases, external attackers recruit insiders through bribery, blackmail, or social engineering, turning trusted employees into access points for broader attacks.

Insider Risk vs. Insider Threat: Key Differences

The distinction between insider risk and insider threat comes down to three factors: intent, impact, and organizational response.

- Intent is the primary differentiator. Insider risk covers both unintentional actions and intentional but non-malicious ones, such as knowingly emailing a file to a personal account to finish work at home. Insider threat involves only intentional, malicious actions designed to cause harm.

- The two also differ in frequency and severity. Insider risks are far more common but typically result in smaller, containable incidents. A single employee's poor password hygiene might not make headlines, but it creates an opening for attackers. Insider threats are rarer but can result in catastrophic damage, such as the loss of trade secrets, operational disruption, or regulatory penalties.

- Organizational response must be tailored to each. Insider risk requires proactive education, behavioral monitoring, and policies that make secure actions the default. Insider threat demands active detection, investigation, forensic analysis, and coordinated incident response involving security, HR, and legal teams.

Treating all insider activity as equally threatening wastes resources and creates a culture of surveillance. Treating all activity as benign leaves critical gaps. Security leaders must differentiate between risk and threat to allocate resources effectively and build defenses that address both.

How Insider Risk Evolves Into Insider Threat

The path from insider risk to insider threat can be gradual. For example, an employee might begin by violating a minor policy, such as sharing credentials with a colleague. If this behavior goes unchecked, it may escalate to more serious violations, like storing sensitive files on unsecured personal devices. Over time, external pressures like financial stress, job dissatisfaction, or coercion by outside actors can push this individual from risky behavior to deliberate malicious action.

This progression can be understood as an insider kill chain. At the start, actions are benign or negligent. As behavior escalates and external factors apply pressure, intent shifts. By the time the insider is actively exfiltrating data or sabotaging systems, the threat is fully realized.

Interrupting this chain early is critical. Behavioral indicators like sudden changes in work habits, accessing data outside normal hours, or unusual download activity may signal that an insider is moving along the spectrum from risk to threat. Early intervention through training, access adjustments, or investigation can prevent harm.
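The indicators named above can be expressed as simple rules. The sketch below is a minimal illustration under stated assumptions, not how any particular product works: the `AccessEvent` record, the 08:00 to 19:00 working window, and the 500 MiB download threshold are all hypothetical. Any single flag is a prompt to gather context, not proof of intent.

```python
from dataclasses import dataclass
from datetime import datetime

# Hypothetical thresholds for illustration; a real program would tune these per role.
WORK_START_HOUR = 8                        # normal hours begin at 08:00
WORK_END_HOUR = 19                         # normal hours end at 19:00
DOWNLOAD_LIMIT_BYTES = 500 * 1024 * 1024   # 500 MiB in a single event


@dataclass
class AccessEvent:
    """One data-access event; field names are hypothetical."""
    user: str
    timestamp: datetime
    bytes_downloaded: int


def behavioral_indicators(event: AccessEvent) -> list[str]:
    """Return the names of the simple indicators this event trips."""
    flags = []
    if not (WORK_START_HOUR <= event.timestamp.hour < WORK_END_HOUR):
        flags.append("off_hours_access")
    if event.timestamp.weekday() >= 5:  # 5 = Saturday, 6 = Sunday
        flags.append("weekend_access")
    if event.bytes_downloaded > DOWNLOAD_LIMIT_BYTES:
        flags.append("large_download")
    return flags


# A 02:30 download of 2 GiB on a Tuesday trips two indicators.
late_night = AccessEvent("dev-42", datetime(2024, 3, 5, 2, 30), 2 * 1024**3)
print(behavioral_indicators(late_night))  # ['off_hours_access', 'large_download']
```

Rules like these are cheap to run but noisy on their own; they become more useful when combined with per-user baselines and context about what data was involved.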

Effective insider risk programs anticipate this evolution. They use behavioral analytics and contextual monitoring to assess user actions in real time, catching concerning patterns before they escalate.


Common Examples of Insider Risk

Insider risk scenarios are often unremarkable until something goes wrong. A marketing professional uploads a file with customer contact information to a personal cloud storage account to work on a presentation at home. The employee has no malicious intent, but the action exposes sensitive data to an unauthorized environment.

A developer copies source code to a USB drive for offline access, not realizing that portable media introduces a vector for data loss or theft. A finance employee uses the same weak password across multiple accounts, making credential compromise easier. An operations manager clicks on a phishing link in an email, inadvertently granting attackers access to internal systems.

These actions occur as part of routine work and do not immediately cause harm. However, they create vulnerabilities that attackers or malicious insiders can exploit later. The common thread is the absence of malicious intent. Employees are trying to be productive and efficient, but in doing so, they unknowingly violate security policies.

Other examples include misconfiguring cloud storage permissions, using unapproved SaaS applications without IT oversight, or failing to encrypt sensitive data before sharing it externally. These behaviors highlight the importance of security awareness training, clear data handling protocols, and tools that provide contextual visibility into user actions.

Common Examples of Insider Threats

An insider threat is best illustrated by deliberate actions taken by a trusted user to cause harm. Consider a software engineer who, after receiving a job offer from a competitor, systematically copies proprietary source code to a personal cloud account over several weeks. This individual knows the organization's policies, understands the value of the data, and takes steps to avoid detection. The action is premeditated, unauthorized, and directly harms the organization.
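The engineer scenario shows why per-event or per-day thresholds alone can miss premeditated exfiltration: each day's volume stays deliberately small. One common countermeasure is to also sum activity over a trailing window. The sketch below assumes daily upload volumes to personal destinations are already being collected; the thresholds and data shape are hypothetical.

```python
from collections import deque
from datetime import date, timedelta

# Hypothetical thresholds, chosen only to illustrate the pattern.
DAILY_LIMIT_MB = 200    # a per-day rule fires above this
WINDOW_DAYS = 30        # trailing window length in days
WINDOW_LIMIT_MB = 1000  # cumulative limit within the window


def daily_rule_alerts(uploads: list[tuple[date, int]]) -> list[date]:
    """Days on which a single-day threshold fires."""
    return [day for day, mb in uploads if mb > DAILY_LIMIT_MB]


def window_rule_alerts(uploads: list[tuple[date, int]]) -> list[date]:
    """Days on which the trailing-window total exceeds WINDOW_LIMIT_MB.

    `uploads` holds (day, megabytes sent to personal destinations), sorted by day.
    """
    window: deque = deque()
    total = 0
    alerts = []
    for day, mb in uploads:
        window.append((day, mb))
        total += mb
        # Evict entries that have fallen out of the trailing window.
        while window[0][0] <= day - timedelta(days=WINDOW_DAYS):
            _, old_mb = window.popleft()
            total -= old_mb
        if total > WINDOW_LIMIT_MB:
            alerts.append(day)
    return alerts


# Two weeks of 150 MB per day: never enough to trip the daily rule.
start = date(2024, 1, 1)
uploads = [(start + timedelta(days=i), 150) for i in range(14)]
print(daily_rule_alerts(uploads))      # []
print(window_rule_alerts(uploads)[0])  # 2024-01-07
```

The window rule catches the cumulative pattern on day seven, while the daily rule never fires. In practice the window length and limits would need tuning against each role's normal behavior.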

Another clear example involves a system administrator who is terminated for performance issues. Before leaving, this individual uses retained access credentials to delete production databases and backup files, causing significant operational disruption. The intent to sabotage is clear, and the impact is immediate.

A third scenario involves corporate espionage. An insider is recruited by a competitor or foreign government to exfiltrate sensitive business strategies, customer lists, or intellectual property. This individual may have been placed intentionally within the organization or turned after hire. They operate slowly and carefully, often using legitimate access patterns to avoid suspicion.

In all these cases, the insider threat is characterized by intent to harm, knowledge of internal systems, and the use of legitimate access to achieve malicious goals. These actors are not making mistakes. They are executing plans.

Strategies for Managing Insider Risk

Managing insider risk starts with recognizing that not every risky action stems from a malicious actor. Security teams must balance trust with verification, creating an environment where secure behavior is encouraged rather than enforced through surveillance.

- Comprehensive employee education is foundational. Users need to understand how their actions affect security and what constitutes acceptable behavior. Training should cover data handling policies, cloud application usage, remote work practices, and the risks of shadow IT. Education should be ongoing, not a one-time event.

- Visibility into user behavior is critical. Deploying tools that provide contextual insights allows security teams to detect risky actions without generating excessive noise. Solutions like data loss prevention (DLP) and insider risk platforms help identify anomalies and trends. These tools should focus on understanding the context behind actions, not just blocking them.

- Security controls must align with employee workflows. If policies are overly restrictive, employees will find workarounds that introduce even greater risk. Making secure behavior the path of least resistance reduces the likelihood of violations. For example, providing secure file-sharing tools that are easy to use discourages employees from turning to unsanctioned cloud storage.

- Insider risk management should be continuous. Regular policy reviews, updated training materials, and periodic assessments keep the program effective as the organization and threat landscape evolve. Metrics such as policy violations, data access trends, and response times help measure program effectiveness and guide resource allocation.

Responding to Insider Threats

Responding to insider threats requires speed, coordination, and clear processes. Detection begins with establishing behavioral baselines for users and identifying deviations that suggest malicious intent. Activity monitoring, anomaly detection, and forensic logging are essential capabilities.
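A behavioral baseline compares each user against their own history rather than a single global rule. A minimal sketch, assuming a daily activity count per user (here, files accessed) is already collected, scores deviations with a z-score. The metric, the seven-day history, and the threshold of 3 are illustrative assumptions; real deployments may use richer statistical or machine-learning models.

```python
import statistics

Z_THRESHOLD = 3.0  # hypothetical: alert when activity is 3+ std devs above baseline


def deviation_score(history: list[float], today: float) -> float:
    """How far today's value sits above this user's own baseline, in std devs."""
    if len(history) < 2:
        raise ValueError("need at least two observations to form a baseline")
    mean = statistics.mean(history)
    stdev = statistics.pstdev(history)
    if stdev == 0:
        # A perfectly flat baseline: any increase counts as an extreme deviation.
        return float("inf") if today > mean else 0.0
    return (today - mean) / stdev


def is_anomalous(history: list[float], today: float, threshold: float = Z_THRESHOLD) -> bool:
    """One-sided check: only unusually high activity is flagged."""
    return deviation_score(history, today) > threshold


# Files accessed per day over the past week for one user.
baseline = [10, 12, 11, 9, 13, 10, 12]
print(is_anomalous(baseline, 12))  # False
print(is_anomalous(baseline, 60))  # True
```

A statistical deviation says that behavior changed, not why. That is the point at which the cross-functional review described next takes over.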

- When a potential threat is detected, the incident response team must include security, HR, legal, and communications personnel. Early coordination ensures actions are legally sound, respect employee rights, and align with organizational policies. Investigations must be thorough but discreet to avoid tipping off the insider before evidence is secured.

- Forensic tools that log user actions, maintain audit trails, and enable detailed investigations are critical. These tools help determine intent and impact, guiding the next steps. Depending on the severity, actions may include revoking access, suspending the user, initiating disciplinary measures, or pursuing legal action.

- Response processes should be documented and practiced through tabletop exercises. This ensures that when an actual incident occurs, the team knows their roles and can act quickly. The goal is not only to stop the current threat but to learn from it and strengthen defenses against future incidents.

- Organizations should also consider implementing technical controls that limit the impact of insider threats. These include least-privilege access, data classification and labeling, multi-factor authentication, and automated alerts for high-risk actions. However, technology alone cannot stop a determined insider. Human judgment and cross-functional collaboration remain essential.
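Two of these controls lend themselves to routine automated checks: trimming permissions nobody uses (least privilege) and catching accounts that outlive employment, as in the terminated system administrator example earlier. The sketch assumes you can export granted permissions, observed permission usage over a review period, and an HR termination list; every name and permission string here is hypothetical.

```python
def unused_grants(granted: dict[str, set[str]], used: dict[str, set[str]]) -> dict[str, set[str]]:
    """Per user, permissions granted but not exercised during the review period."""
    result = {}
    for user, perms in granted.items():
        idle = perms - used.get(user, set())
        if idle:
            result[user] = idle
    return result


def orphaned_accounts(active_accounts: set[str], terminated: set[str]) -> set[str]:
    """Accounts still enabled for people HR has marked as terminated."""
    return active_accounts & terminated


granted = {
    "alice": {"crm:read", "crm:export", "db:admin"},
    "bob": {"crm:read"},
}
used = {"alice": {"crm:read"}, "bob": {"crm:read"}}

# alice holds crm:export and db:admin without using them (set order may vary).
print(unused_grants(granted, used))
print(orphaned_accounts({"alice", "bob", "carol"}, {"carol", "dave"}))  # {'carol'}
```

Unused grants are candidates for review rather than automatic revocation, since some permissions (for example, break-glass access) are legitimately rare.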

Building an Insider Risk Management Program

A mature insider risk management program integrates proactive and reactive strategies, addressing both insider risk and insider threat. The foundation starts with understanding what you are trying to protect: identify your most sensitive data, know who accesses it, and trace how it moves across your environment. Without that data visibility, the rest of the program is guesswork.

From there, the program must be cross-functional, with support from security, HR, compliance, and executive leadership. HR surfaces context around employee status and high-risk situations like terminations. Legal and compliance keep the program aligned with data privacy requirements. Executives drive cultural buy-in, making security part of how the organization operates rather than a set of restrictions layered on top of it.

A clear policy framework defines acceptable behavior, outlines consequences for violations, and promotes accountability. Policies should be specific, consistently enforced, and regularly communicated. They only work if employees understand them and see them applied fairly.

Technology provides the visibility that makes everything else actionable. Modern insider risk tools combine user behavior analytics with data lineage, so security teams understand not just what action occurred, but what data was involved, where it originated, and whether the behavior represents genuine risk. This is what separates detection from understanding. When you can follow the data, you can assess intent, not just activity, and that distinction determines how you respond.
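The idea behind data lineage can be illustrated with a toy model in which every copy records what it was derived from. This is a simplified sketch, not Cyberhaven's implementation: real lineage forms a graph in which an object can have many sources, and the `DataObject` fields below are hypothetical. It shows how a renamed, compressed upload can be tied back to a sensitive origin even when the uploaded file itself carries no label.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DataObject:
    """A file or a copy of it; fields are hypothetical and simplified."""
    name: str
    location: str                          # where this copy lives
    sensitive: bool = False                # classification applied at the origin
    parent: Optional["DataObject"] = None  # the object this one was derived from


def lineage(obj: DataObject) -> list[DataObject]:
    """Walk derivation links from an object back to its origin."""
    chain = []
    node: Optional[DataObject] = obj
    while node is not None:
        chain.append(node)
        node = node.parent
    return chain


def derived_from_sensitive(obj: DataObject) -> bool:
    """True if the object or any ancestor was classified as sensitive."""
    return any(node.sensitive for node in lineage(obj))


source = DataObject("payments_service.py", "git-repo", sensitive=True)
archive = DataObject("backup.zip", "laptop", parent=source)
upload = DataObject("notes.zip", "personal-drive", parent=archive)

print([n.location for n in lineage(upload)])  # ['personal-drive', 'laptop', 'git-repo']
print(derived_from_sensitive(upload))         # True
```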

Metrics and measurement keep the program honest. Track policy violations, data access patterns, investigation timelines, and incident outcomes. These indicators show where the program is working and where it needs to be adjusted. They also demonstrate value to senior stakeholders who need to understand the return on investment.

Insider risk management requires ongoing attention. As the organization grows, as employees adopt new tools, and as the threat landscape shifts, the program has to evolve alongside it.

Shifting from Reactive to Proactive Security With Cyberhaven

The distinction between insider risk and insider threat points to a core challenge in modern security: the threat is already inside the perimeter, and traditional tools were not built to see it clearly.

Managing insider risk requires visibility into how data actually moves. Cyberhaven tracks the full lineage of data across devices, applications, and cloud environments, giving security teams the context to assess whether a behavior represents a mistake, a policy gap, or deliberate harm. When you can follow the data, the difference between a negligent employee and a malicious one becomes something you can detect and act on, not just speculate about.

That visibility powers faster, more confident decisions across the full spectrum: coaching users before risks escalate, detecting the slow-burning patterns that signal a departing employee collecting sensitive files, and investigating incidents with the forensic detail needed to determine what happened and why.

Organizations that treat insider risk and insider threat as separate but related problems are better positioned to allocate resources effectively, build defenses that fit both, and respond before damage compounds. Cyberhaven is built to support that approach, connecting data signals to user behavior so security teams spend less time chasing alerts and more time stopping real threats.

Better understand insider risk management with Insider Risk Management: The O'Reilly® Guide to Proactive Data Security.
